We are rapidly approaching a reality where synthetic media output actually outnumbers authentic human information. UNESCO describes this as a crisis of knowing where traditional epistemological mechanisms break down completely. Nowhere is this vulnerability more urgent than in the cryptocurrency sector. In the crypto market, deepfakes are currently fueling a massive surge in AI-enabled financial fraud.

Scott Stornetta's 1991 work on timestamping digital documents laid the foundation for modern blockchain tech. He is now applying those same principles to decentralized digital identity verification to combat this exact threat. Rather than relying on an endless cycle of better detection tools, this approach uses Web3 infrastructure to make deepfakes irrelevant. Stornetta is actively implementing these systems by cryptographically signing video content and leveraging decentralized identifiers.

The Crypto Noise-to-Signal Inversion

"What we're seeing with the abundance of information is we're going to see a world not far away where it's no longer a signal-to-noise ratio, but a noise-to-signal ratio," Scott Stornetta explained during a recent interview with Binance's Jessica Walker. Accurate information was once the baseline. We are now inverting to an environment where manipulated content is the default.

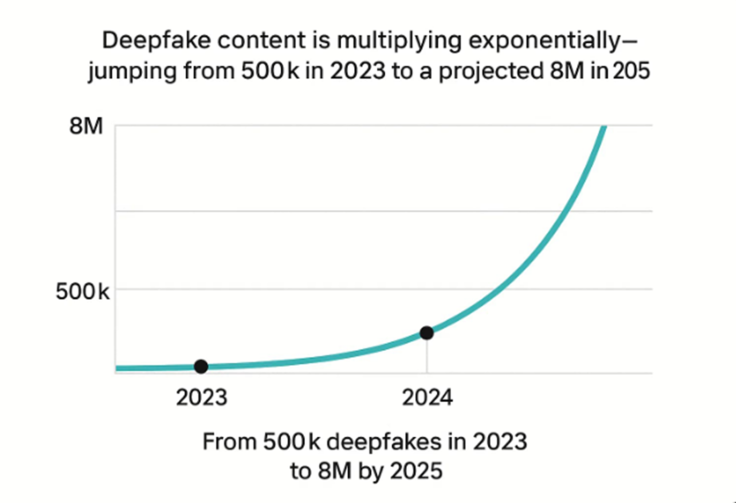

This shift is severely impacting the digital asset space. Data from DeepStrike indicates an approximate 900% annual increase in overall deepfake volume, while recent industry reports reveal a 500% year-over-year surge in AI-enabled crypto scams. Scammers are exploiting this technology to industrialize fraud across the blockchain sector. Criminals deploy live deepfakes to impersonate cryptocurrency exchange executives during video calls. Deepfakes and AI help them bypass security protocols, authorize fraudulent wire transfers, or steal credentials such as those for crypto wallets.

The financial impact is staggering. In 2025 alone, scam-related activity accounted for an estimated $30 billion in illicit cryptocurrency volume. Malicious actors use generative AI to automate financial grooming schemes, creating synthetic trust at an industrial scale to drain users' digital wallets before victims realize the deception. Dr. Nadia Naffi from UNESCO notes that deepfakes do more than introduce falsehoods: they erode the very mechanisms by which societies construct shared understanding.

Trying to detect every synthetic file has become mathematically futile. "When that signal-to-noise ratio turns into a noise-to-signal ratio, we very much need a higher assurance standard that doesn't try so much to fight the deep fakes as to make them irrelevant," Stornetta added.

Decentralized Identity as the Architectural Solution

"Just as we created a system where trust was so distributed across so many people, that we didn't have to have a trusted third party, the question in my mind has been the last couple of years," Stornetta said. "Is there a way to use similar principles of distributing that trust broadly so that even if you can't trust your eyes, you can trust that the person you think you're interacting with is in fact that person."

The technical approach relies on Decentralized Identifiers (DIDs) and Verifiable Credentials (VCs). A DID is anchored on the blockchain and functions as a globally unique, user-owned digital passport secured by public key cryptography rather than a centralized registry. VCs are cryptographically signed, tamper-evident digital attestations that work within this framework, providing verifiable proof of a user's identity or attributes.

By relying on cryptographic proof rather than visual confirmation, this Web3 infrastructure solves the identity crisis created by synthetic media. When a video or communication is signed using the private key associated with a verified DID, viewers can confirm its origin instantly. Once stored on a public ledger, nobody can alter that record. This aligns with World Economic Forum recommendations for building knowledge ecologies, creating environments where verifiable mechanisms generate resilient understanding and secure digital identities against synthetic spoofing.
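To make the sign-then-verify flow concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not how any production DID system works: real deployments typically use schemes such as Ed25519 or ECDSA, while this sketch swaps in a Lamport one-time signature so that it runs on the standard library alone. The `did_registry` dictionary and the `did:example:alice` identifier are hypothetical stand-ins for resolving a DID on a ledger.

```python
import hashlib
import secrets


def sha256(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def generate_keypair():
    # Lamport keys: 256 pairs of random secrets (private) and their hashes (public).
    private_key = [(secrets.token_bytes(32), secrets.token_bytes(32)) for _ in range(256)]
    public_key = [(sha256(zero), sha256(one)) for zero, one in private_key]
    return private_key, public_key


def digest_bits(content: bytes):
    # The 256 bits of the content's SHA-256 digest, most significant bit first.
    digest = sha256(content)
    return [(digest[i // 8] >> (7 - i % 8)) & 1 for i in range(256)]


def sign(private_key, content: bytes):
    # Reveal one secret per digest bit. A Lamport key must sign only ONE message.
    return [private_key[i][bit] for i, bit in enumerate(digest_bits(content))]


def verify(public_key, content: bytes, signature) -> bool:
    # Each revealed secret must hash to the public value chosen by the digest bit.
    if len(signature) != 256:
        return False
    bits = digest_bits(content)
    return all(sha256(signature[i]) == public_key[i][bits[i]] for i in range(256))


# Hypothetical stand-in for a ledger lookup: DID -> public key.
did_registry = {}

creator_did = "did:example:alice"  # illustrative identifier, not a real DID
private_key, public_key = generate_keypair()
did_registry[creator_did] = public_key

video = b"raw bytes of the original recording"
signature = sign(private_key, video)

authentic = verify(did_registry[creator_did], video, signature)
forged = verify(did_registry[creator_did], b"raw bytes of a deepfaked recording", signature)
print(authentic, forged)  # True False
```

The viewer never has to judge whether the footage looks real. The check passes only if the bytes match what the key holder signed, so a fabricated clip fails verification however convincing it appears.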

From Concept to Implementation

The building blocks for this architecture already exist. The Coalition for Content Provenance and Authenticity (C2PA) has developed technical specifications to establish industry standards for media provenance. Implementation is accelerating at a national scale.

Bhutan is tapping into Ethereum and W3C standards to relaunch the country's digital identity platform. This blockchain-based solution allows citizens to cryptographically prove attributes such as their age, residency, and citizenship without centralized databases. The platform's Ethereum migration is expected to take place early this year.

The World Economic Forum advises organizations to implement infrastructure-level protections including camera path verification and temporal consistency monitoring to combat deepfake-driven identity fraud. Crypto companies are also adapting to this verified environment. Binance recently adopted a Responsible AI Framework that aligns with global standards. It also secured ISO 42001 certification for AI management. This covers the full lifecycle of automated systems and anti-fraud controls.

The takeaway? The broader tech sector is moving toward verified and governed digital ecosystems.

The Indiana Jones Solution

Stornetta compares the current panic over synthetic media to a famous scene in Raiders of the Lost Ark. The narrative builds intense tension about how Indiana Jones will defeat a massive, sword-wielding giant in the marketplace. But instead of engaging in a complex sword fight, Indy simply pulls out his gun and ends the encounter.

We face a similar buildup regarding how the cryptocurrency industry and society will cope with increasingly deceptive videos and AI-driven scams. The answer is simply to make them irrelevant and move on. Instead of engaging in an endless detection arms race, cryptographic proof of origin and decentralized identifiers render the entire contest unnecessary. Proving authentic content at the point of creation is far more efficient than hunting down every fabrication across the internet.

The Underlayer That Proves Itself

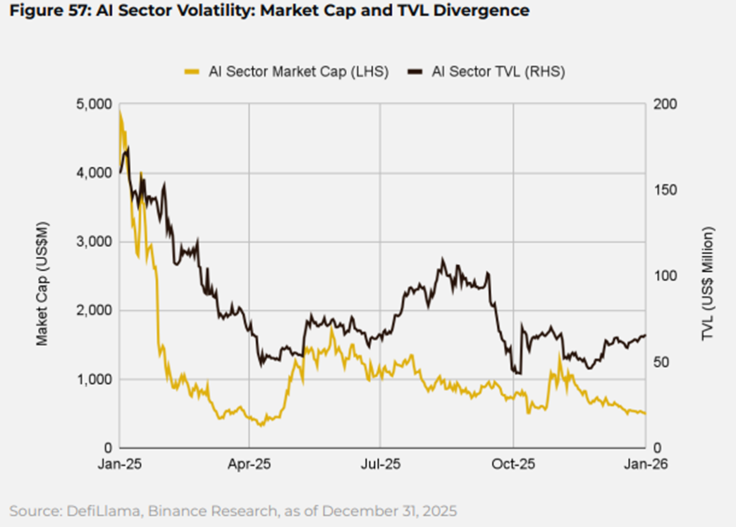

From timestamping digital records in 1991 to current blockchain-based identity applications, the core principle remains consistent. Trust must be distributed so broadly across Web3 networks that no single point of failure exists. Binance Research recently noted that the fusion of AI and blockchain enhances security and efficiency across global finance, particularly as institutions adopt these new frameworks.
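The timestamping principle can be illustrated with a short hash-chain sketch. This is a simplified illustration of linked timestamping, not the exact 1991 scheme or any particular blockchain: each record stores the digest of a document plus the hash of the previous record, so changing an old entry breaks the chain. The function names, documents, and timestamps are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first record
FIELDS = ("document_digest", "timestamp", "previous_hash")


def record_hash(body: dict) -> str:
    # Hash a canonical JSON encoding so the same body always yields the same hash.
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_record(chain: list, document: bytes, timestamp: str) -> None:
    # Link the new record to the hash of the record before it.
    body = {
        "document_digest": hashlib.sha256(document).hexdigest(),
        "timestamp": timestamp,
        "previous_hash": chain[-1]["hash"] if chain else GENESIS,
    }
    chain.append({**body, "hash": record_hash(body)})


def chain_is_valid(chain: list) -> bool:
    # Recompute every hash and check every link back to the start.
    previous = GENESIS
    for entry in chain:
        body = {field: entry[field] for field in FIELDS}
        if entry["previous_hash"] != previous or entry["hash"] != record_hash(body):
            return False
        previous = entry["hash"]
    return True


chain = []
append_record(chain, b"signed contract", "2025-01-01T00:00:00Z")
append_record(chain, b"executive video statement", "2025-01-02T00:00:00Z")
append_record(chain, b"press release", "2025-01-03T00:00:00Z")
valid_before = chain_is_valid(chain)

# Quietly swap the first document for a forged one.
chain[0]["document_digest"] = hashlib.sha256(b"forged contract").hexdigest()
valid_after = chain_is_valid(chain)
print(valid_before, valid_after)  # True False
```

A forger holding the only copy could recompute every later hash to hide the change. That stops working once many independent parties hold copies of the latest hash, which is the sense in which distributing trust broadly removes the single point of failure.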

The proliferation of synthetic content in the crypto market means that systems designed to prove authenticity through decentralized identifiers will become essential infrastructure. The future of digital interaction no longer depends on spotting the fakes. It relies instead on an invisible, cryptographic technical underlayer that permanently proves what is real.