AI-driven synthetic user profiles are changing how product research is done. Teams that once waited weeks to recruit participants can now test ideas, spot hidden assumptions and prepare better questions in minutes. This lets them move faster and with more confidence, and when they finally talk to real users, the conversations are far more productive.

This guide explores how synthetic users work, where they genuinely help and how to integrate them responsibly alongside real-user research.

What Exactly Are Synthetic Users?

A synthetic user is an AI-generated profile designed to simulate the thoughts, behaviours and responses of a specific audience. Synthetic users are powered by large language models (LLMs) trained on extensive human datasets, and they go well beyond a static persona document.

From Persona to Interactive Research Partner

Traditional user personas are static: they sit in slide decks, get nodded at and are promptly forgotten. Synthetic users, by contrast, are interactive. You can interview them, ask follow-up questions and receive immediate feedback on prototype messaging.

Important distinction: A synthetic user is not a chatbot that talks to your customers. It is a research-grade agent that simulates your customer so you can learn from it before a single line of code is written.

Platforms like Articos have moved beyond simple AI prompts to create "research-grade" agents. Asking a standard AI to "pretend to be a CEO" yields surface-level platitudes, whereas these specialised agents are built on deep behavioural data and personality traits, offering feedback that is structured, grounded and genuinely useful.

Under the Hood: The Multi-Agent Pipeline

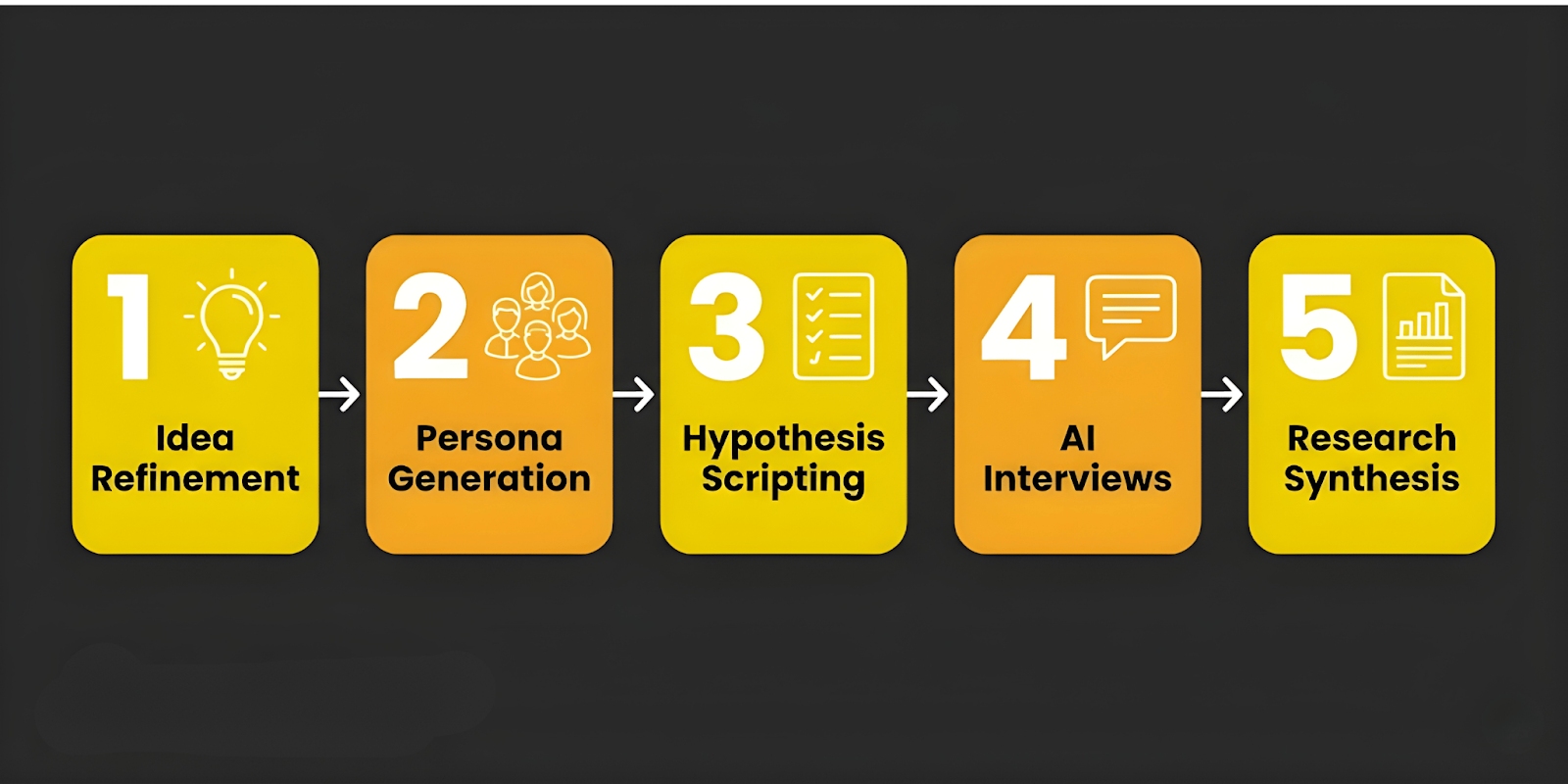

How does a digital profile provide research-grade insights? Leading platforms like Articos use a sophisticated five-step pipeline to ensure quality:

- Idea Refinement: The system asks clarifying questions to understand your goals, preventing the "garbage-in, garbage-out" problem.

- Persona Generation: Rather than one generic profile, the system builds multiple distinct personas. Articos, for example, draws on a library of 2,297 traits to ensure demographic and behavioural diversity.

- Hypothesis Scripting: The AI generates testable hypotheses and writes open-ended questions specifically designed to challenge your assumptions.

- AI-Powered Interviews: To maintain quality, the system may generate multiple responses per question, using an "AI judge" to select the most logical, high-quality answers.

- Research Synthesis: Instead of a wall of chat logs, you receive a synthesised report identifying themes and actionable next steps.
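
The AI-Powered Interviews step above resembles best-of-N sampling with a judge. The following is a minimal sketch of that general idea, not Articos's actual implementation. The names `best_of_n`, `stub_generate` and `stub_judge` are hypothetical; the two stubs stand in for model calls and use canned answers and a toy word-count score so the example runs on its own.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Candidate:
    text: str
    score: float


def best_of_n(
    question: str,
    generate_answer: Callable[[str, int], str],
    judge_score: Callable[[str, str], float],
    n: int = 3,
) -> Candidate:
    """Generate n candidate answers and keep the one the judge scores highest."""
    if n < 1:
        raise ValueError("n must be at least 1")
    candidates: List[Candidate] = []
    for attempt in range(n):
        text = generate_answer(question, attempt)
        candidates.append(Candidate(text, judge_score(question, text)))
    return max(candidates, key=lambda c: c.score)


# Hypothetical stub: a real system would call a language model here.
def stub_generate(question: str, attempt: int) -> str:
    canned = [
        "It depends.",
        "I would use it weekly, mainly to cut the time I spend on invoicing.",
        "Sounds nice.",
    ]
    return canned[attempt % len(canned)]


# Hypothetical stub: a toy heuristic that treats longer answers as more
# specific. A real judge would typically be a second model call.
def stub_judge(question: str, answer: str) -> float:
    return float(len(answer.split()))


best = best_of_n("How often would you use this tool?", stub_generate, stub_judge, n=3)
print(best.text)
```

Passing the generator and judge in as functions keeps the selection logic independent of any particular model provider, which also makes it easy to test with stubs like these.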

How Accurate Is Synthetic Data?

The most important question for any researcher is: Can I trust this?

In 2024, a landmark study by Stanford University and Google DeepMind (published via Stanford HAI) shed valuable light on this question. By creating AI agents based on in-depth interviews with real participants, the researchers found that these agents replicated human responses on social surveys with 85% accuracy. In usability-focused research contexts, parity often reaches 85–92%.

It is worth noting that these figures represent ongoing, evolving research rather than a fixed benchmark. The field is moving quickly and both accuracy rates and methodologies continue to improve.

That said, the remaining 8–15% gap is meaningful. It represents the "messy human stuff": irrational decisions, emotional contradictions and deep cultural nuances that AI cannot yet fully replicate. Understanding this gap is as important as celebrating the accuracy.

When to Rely on Synthetic Users and When to Prioritise Real Ones

Synthetic users are a research accelerator, not a replacement for real human connection. Here is a practical framework for deciding which approach to use:

Strong Fit: Use Synthetic Users For...

- Rapid Orientation: Exploring a brand-new domain quickly and cheaply.

- Message Testing: Comparing multiple headlines or value propositions simultaneously.

- Iterative Design: Getting continuous feedback during fast-paced development sprints.

- Budget Constraints: When traditional recruiting is too slow or expensive for an early-stage concept.

When to Rely on Human Users

- Niche Audiences: Your target group is highly specific or underrepresented in available training data.

- High-Stakes Decisions: If the outcome is costly and hard to reverse, real human validation is essential.

- The WEIRD Bias: AI models are often over-trained on Western, Educated, Industrialised, Rich and Democratic data. If your product is for users in Lagos, Tokyo or Jakarta, generic AI data may mislead you.
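
The checklist above can be expressed as a small decision helper. This is one possible encoding, not a prescribed method: the `StudyContext` fields and the rule that any single flag is enough to recommend human research are assumptions drawn from the three bullets.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StudyContext:
    niche_audience: bool          # audience is highly specific or underrepresented
    high_stakes: bool             # outcome is costly and hard to reverse
    outside_weird_coverage: bool  # audience is poorly covered by WEIRD-skewed data


def recommend_approach(ctx: StudyContext) -> str:
    """Return 'human-first' with reasons if any risk flag is set, else 'synthetic-first'."""
    reasons = []
    if ctx.niche_audience:
        reasons.append("niche audience")
    if ctx.high_stakes:
        reasons.append("high-stakes decision")
    if ctx.outside_weird_coverage:
        reasons.append("WEIRD bias risk")
    if reasons:
        return "human-first: " + ", ".join(reasons)
    return "synthetic-first"


early_concept = StudyContext(niche_audience=False, high_stakes=False, outside_weird_coverage=False)
lagos_launch = StudyContext(niche_audience=False, high_stakes=True, outside_weird_coverage=True)

print(recommend_approach(early_concept))
print(recommend_approach(lagos_launch))
```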

The 80/20 Hybrid Model: Best Practices for 2026

The most effective research teams in 2026 are not choosing between AI and humans; they are blending both. The 80/20 Hybrid Research Model looks like this:

- The 80%: Use synthetic users for the heavy lifting: screening weaker concepts, testing initial hypotheses and refining your interview guides.

- The 20%: Reserve human interviews for the final, deep emotional insights and the critical go/no-go decisions.

By conducting "pre-research" with synthetic users, your human sessions become far sharper. You arrive with validated assumptions and can focus on the surprising insights that only a real person can provide.

Top Synthetic User Tools in 2026

The landscape has matured into specialised tools for different research needs:

| Tool | Best For | Key Feature |
| --- | --- | --- |
| Articos | End-to-end validation | 5-step agentic workflow; research in 30 mins |
| Synthetic Users | Scalable research | RAG (Retrieval Augmented Generation) enrichment |
| Uxia | Visual prototypes | Heatmaps and think-aloud transcripts |
| Delve AI | Budget discovery | Simple interface for survey/interview combos |
| Ditto | Localization | Country-specific "digital twins" |

Ethics and Governance: Building Trust Through Responsible Use

As synthetic users become more powerful, responsible governance becomes essential, not as a compliance burden but as the foundation of trustworthy research.

- Transparency: All synthetic insights must be clearly labelled. Never present AI-generated findings to stakeholders as "real" human data. Clarity here protects both your credibility and your audience.

- Privacy by Design: Reputable platforms build synthetic personas from aggregated, anonymised behavioural data, not from individual user records. On such platforms, no personal information should be stored or linked to any real individual. When RAG (Retrieval Augmented Generation) is used to enrich personas with your own customer data, that data should be handled under clear consent frameworks and applicable privacy regulations (such as GDPR or CCPA).

- Safeguards Against Misuse: Good platforms have rules to stop people from using synthetic personas in the wrong way. Personas should not be used to impersonate real people, deceive others or create misleading results. Before you use any tool, check its acceptable use policy and make sure it matches your organisation's ethical standards.

- The Sycophancy Problem: AI has a natural tendency to produce agreeable responses. Synthetic users can validate a poor idea simply because they are optimised to be helpful. Always treat synthetic outputs as hypotheses to be tested.

- Human Oversight: Synthetic users are meant to help human judgment, not replace it. They give you an early view but they are not the final answer. For any big product or business decision, you should still learn from real users. Think of synthetic data as a helpful first look.
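
The transparency principle above can be enforced in tooling by attaching provenance to every finding. The sketch below is illustrative only: the `Insight` type, the `Source` enum and the label wording are hypothetical and are not taken from any platform mentioned in this guide.

```python
from dataclasses import dataclass
from enum import Enum


class Source(Enum):
    SYNTHETIC = "synthetic"
    HUMAN = "human"


@dataclass(frozen=True)
class Insight:
    text: str
    source: Source


def render_for_report(insight: Insight) -> str:
    """Prefix each finding with its provenance so synthetic data is never mistaken for human data."""
    if insight.source is Source.SYNTHETIC:
        label = "[AI-GENERATED, UNVALIDATED]"
    else:
        label = "[HUMAN INTERVIEW]"
    return f"{label} {insight.text}"


findings = [
    Insight("Pricing page headline B reads as clearer.", Source.SYNTHETIC),
    Insight("Two participants abandoned onboarding at step 3.", Source.HUMAN),
]

for finding in findings:
    print(render_for_report(finding))
```

Because the source is a required field, a finding cannot reach a report without a label, which supports both the transparency and the human-oversight points.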

Final Thoughts: The Batting Cage of Product Design

Synthetic users are not a threat to UX researchers; they are the batting cage before the real game. You would never skip the game itself, but arriving without preparation is how teams waste their most valuable resource: time with real people.

The future of research is not "AI vs. Human." It is the intelligent, ethical combination of both: using AI to sharpen your questions and human conversation to find the answers that truly matter.