- Spotify has announced a new music approval system called Artist Profile Protection

- It allows artists to approve or decline music releases before they appear on their profile

- The new system aims to prevent fraudulent streams and AI impersonation

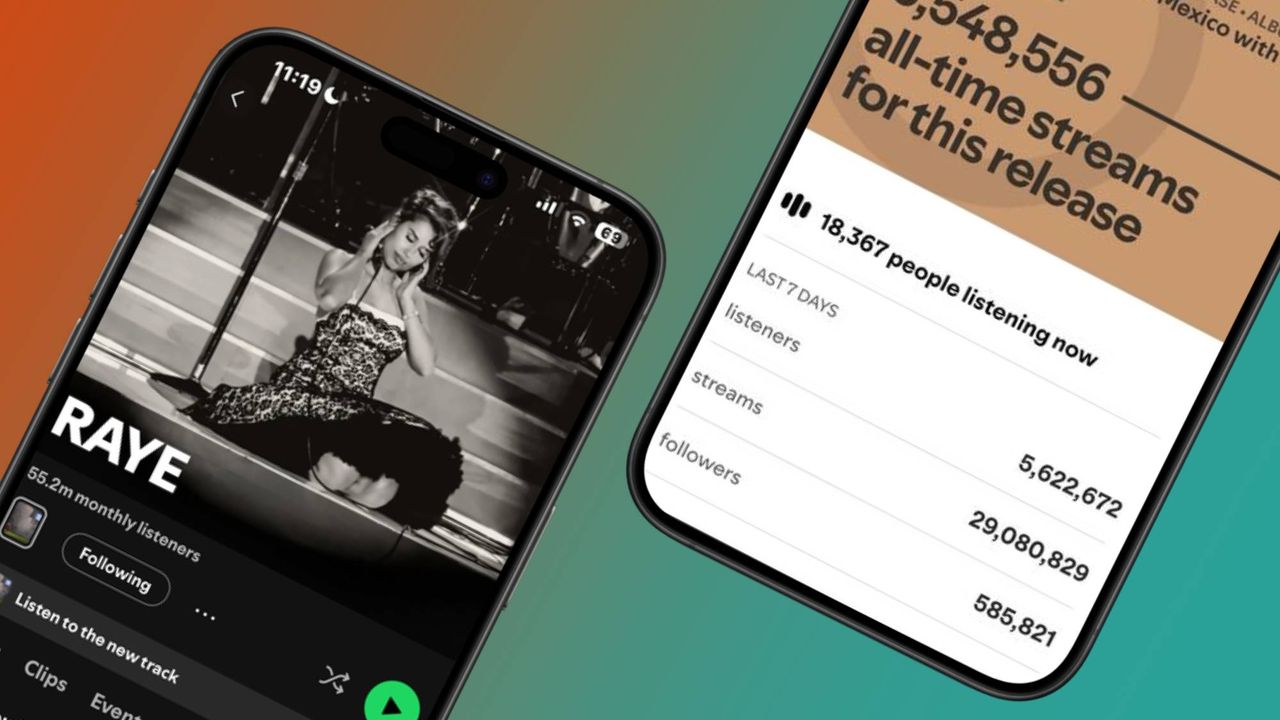

Spotify has faced its fair share of criticism from loyal yet frustrated subscribers who are tired of AI-generated music plaguing Discover Weekly and Release Radar, and now the music streaming platform is taking its first big step towards tackling the problem with a ‘first-of-its-kind solution’.

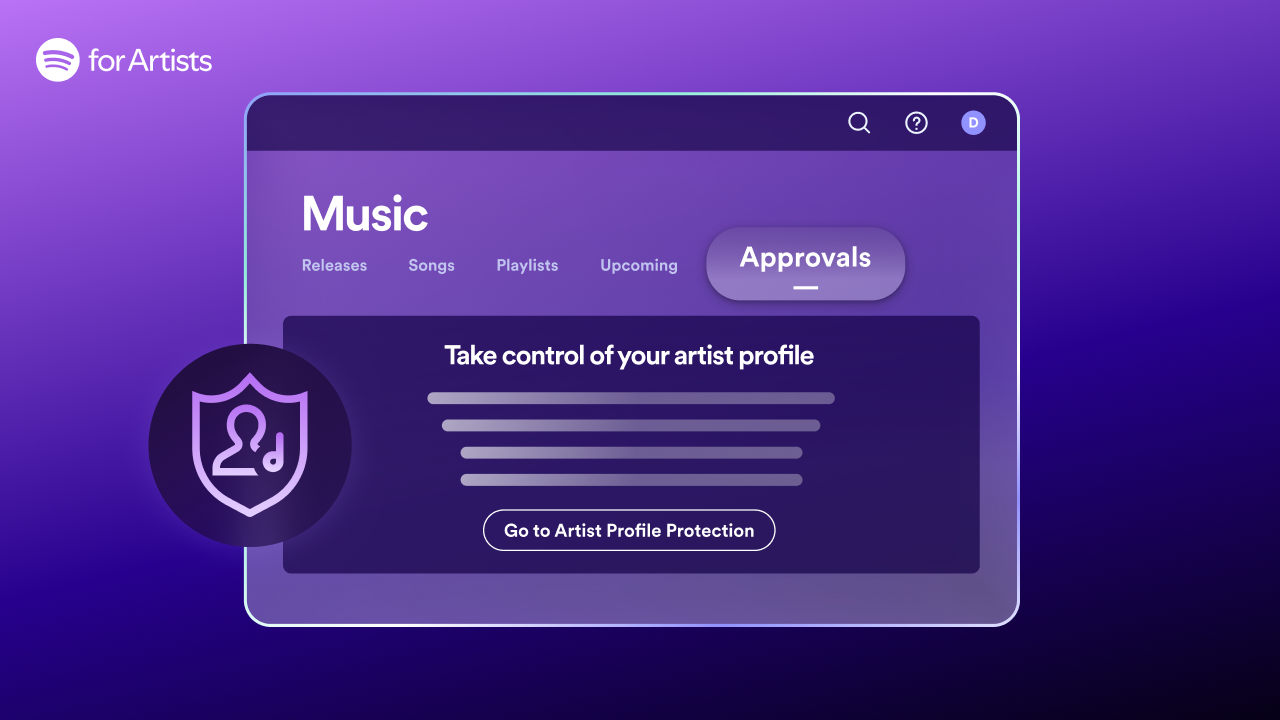

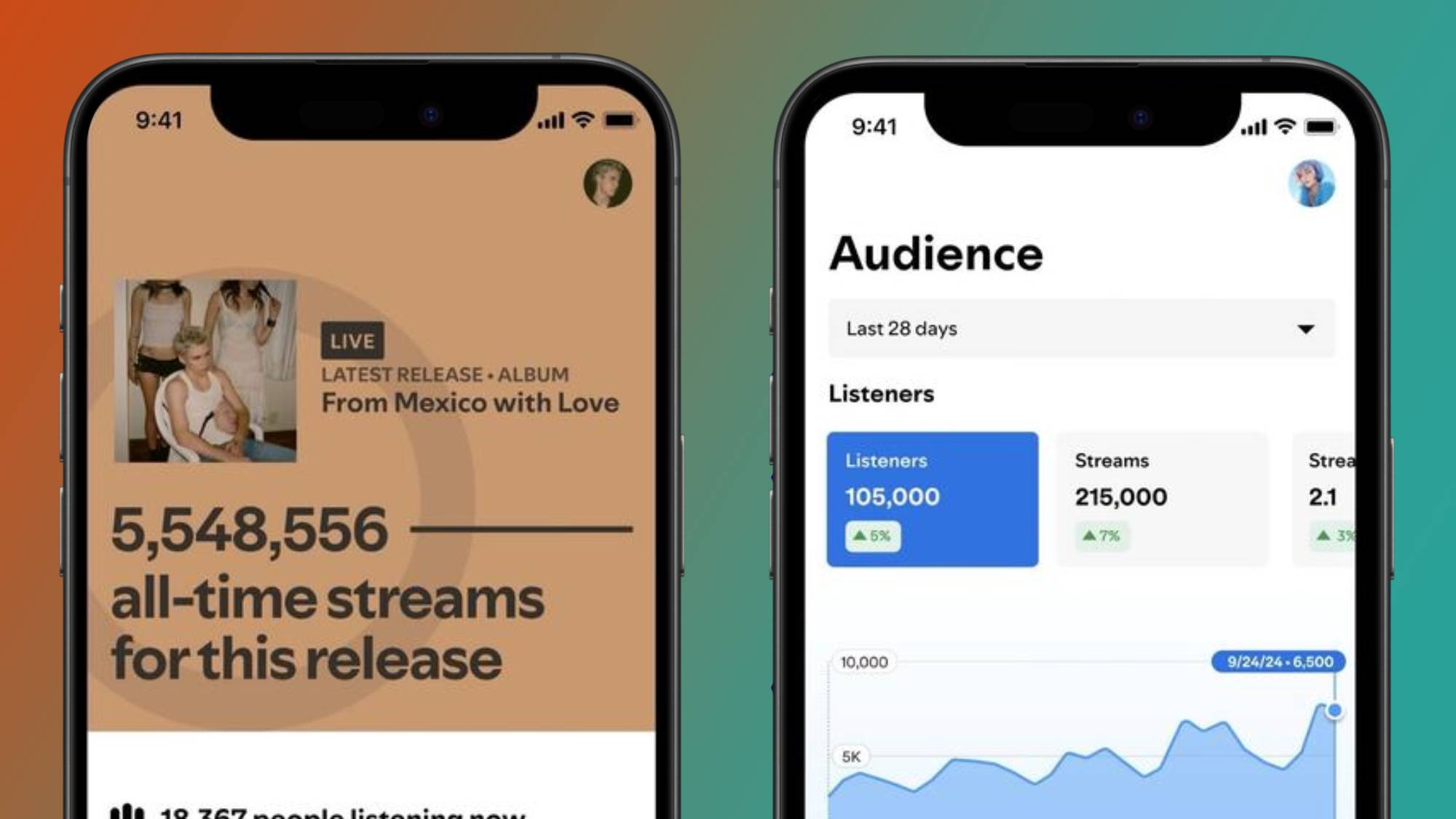

Rolling out in beta today (March 24), Artist Profile Protection is a new opt-in system from Spotify that puts artists in direct control over what new music appears under their name and profile. Essentially, it’s an approval stage that allows artists to review eligible music releases before they go live on their profile, shielding them from AI impersonation and ensuring that listeners are streaming legitimate music.

“Music has been landing on the wrong artist pages across streaming services, and the rise of easy-to-produce AI tracks has made the problem worse,” says Spotify in its announcement. “That’s not the experience we want artists to have on Spotify, and that’s why we’ve made protecting artist identity a top priority for 2026”.

Though Spotify has encouraged subscribers and artists to use its reporting resources to flag AI-generated music, this marks the first time the company has given artists an active role in preventing AI fraud and avoiding common mix-ups in the release process. It follows Apple Music’s ‘Transparency Tags’ announcement, a system that leaves the responsibility of disclosing AI music to labels and distributors, which doesn’t necessarily guarantee 100% transparency. Spotify’s system works slightly differently.

How Artist Profile Protection works

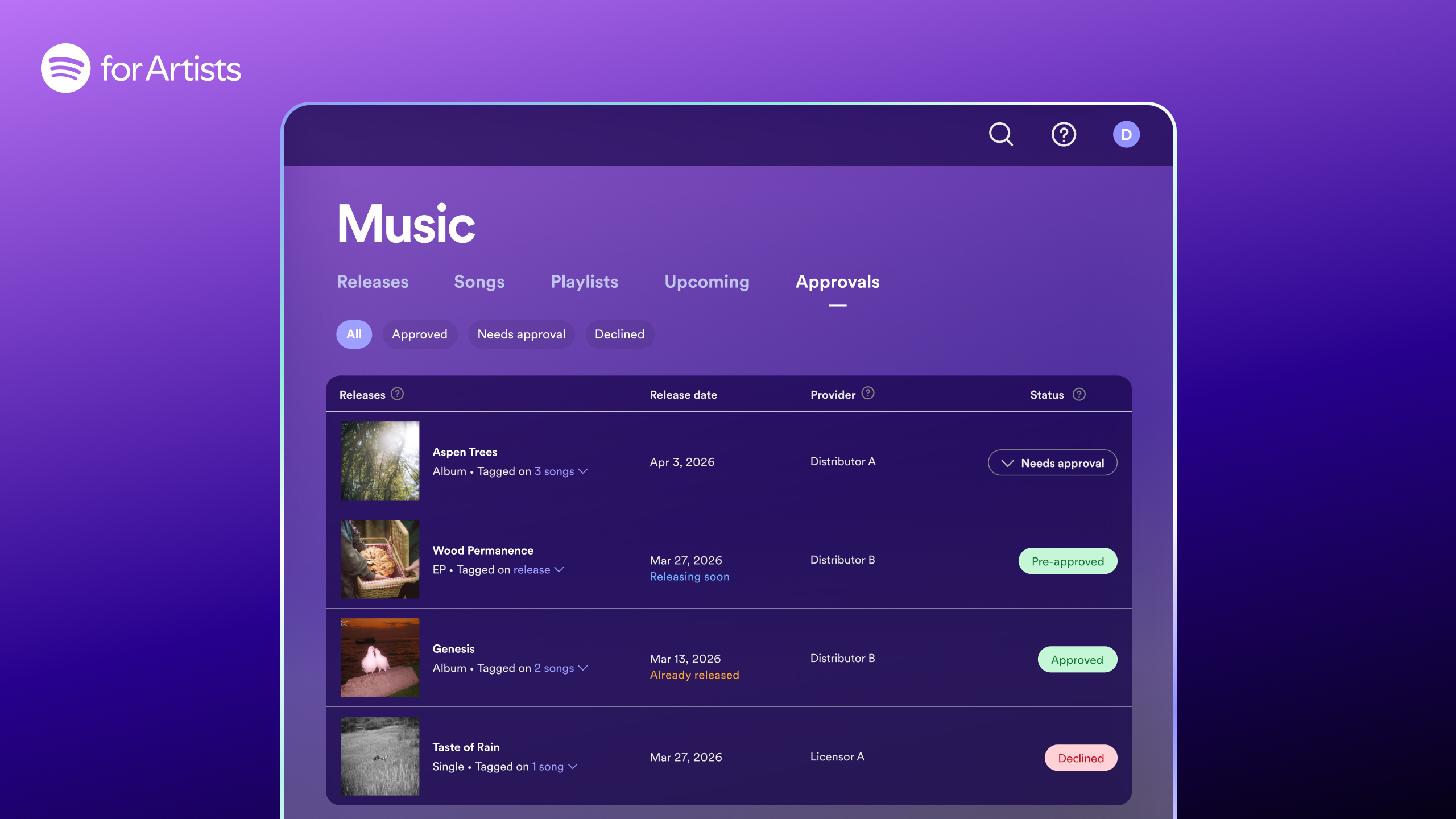

If a musician enables Artist Profile Protection in the Spotify for Artists settings (Artist Team Admins and Editors have the ability to manage this), they’ll receive an email notification when music is sent to Spotify in their name. They can then review the music and choose whether to approve or decline its release.

If an artist approves a release, it will go live as normal, contributing to artist stats and recommendations for listeners. If they decline it or take no action, it won’t appear on their profile, but it may still go live on other platforms besides Spotify. With that in mind, Spotify recommends notifying the distributor. However, there’s a way for artists to bypass this process.

When the feature is enabled, artists will be given what Spotify is calling an ‘artist key’, which is essentially a unique code musicians can share with trusted music distributors. When music is delivered to Spotify with the artist key attached, the release will automatically be approved. The company goes into further detail on how Artist Profile Protection functions on the Spotify for Artists page.
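To make the flow above easier to follow, here is a minimal Python sketch of the decision logic as this article describes it. It is purely illustrative and is not Spotify's code or API: the names `Delivery`, `Decision` and `appears_on_profile` are hypothetical, and the sketch assumes that releases for artists who haven't opted in go live with no approval step.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Decision(Enum):
    """What the artist (or their team) did with the review notification."""
    APPROVED = "approved"
    DECLINED = "declined"
    NO_ACTION = "no_action"


@dataclass
class Delivery:
    """A release sent to the platform in an artist's name (hypothetical model)."""
    artist_name: str
    title: str
    artist_key: Optional[str] = None  # key attached by the distributor, if any


def appears_on_profile(
    delivery: Delivery,
    protection_enabled: bool,
    profile_key: Optional[str],
    decision: Decision = Decision.NO_ACTION,
) -> bool:
    """Return True if the release would show up on the artist's profile."""
    # Assumption: the feature is opt-in, so without it there is no approval step.
    if not protection_enabled:
        return True
    # A matching artist key from a trusted distributor means automatic approval.
    if delivery.artist_key is not None and delivery.artist_key == profile_key:
        return True
    # Otherwise only an explicit approval lets the release through;
    # declining or taking no action keeps it off the profile.
    return decision is Decision.APPROVED
```

In this sketch, a release delivered without the key and left unreviewed returns `False`, which mirrors the point that taking no action has the same effect as declining.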

Tightening the screws

Until now, it’s been easy for fraudsters to upload AI-generated music to Spotify to impersonate bigger artists and steal royalties through streams. While Artist Profile Protection doesn’t remove AI-generated music from the platform altogether, it tightens the screws on the approval process and is a step towards curbing the rise of AI fraud.

The main difference between Spotify's approach and Apple Music's system is that, although Team Admins and Editors manage Artist Profile Protection, musicians still have a say in what music is released. And though it's technically an opt-in system, once an artist enables the feature, music won't be released on their profile unless it gets approved, even if they never act on a notification, so there's an added layer of protection.

These factors aside, the system also aims to rebuild trust with listeners, allowing them to stream their favorite artists with confidence that the music is legitimate. Additionally, it ensures that only artist-approved music appears in recommendations such as Discover Weekly and Release Radar, which, as we've seen from a handful of listeners, is where most of the AI slop turns up.