Australian schools have been told that, from next year, they should not use generative artificial intelligence tools that sell student data, under a national framework developed to guide the safe and ethical use of the technology.

But the framework, developed by a taskforce of experts over more than six months and agreed to by education ministers in October, has also softened a principle aimed at vendors.

Of the six guiding principles in the framework, which will come into effect when schools reopen for their first term in 2024, education ministers were particularly concerned with protecting student privacy.

A combined $1 million commitment has been made to Education Services Australia so it can update its privacy and security principles to protect student privacy and data where generative AI is used in schools.

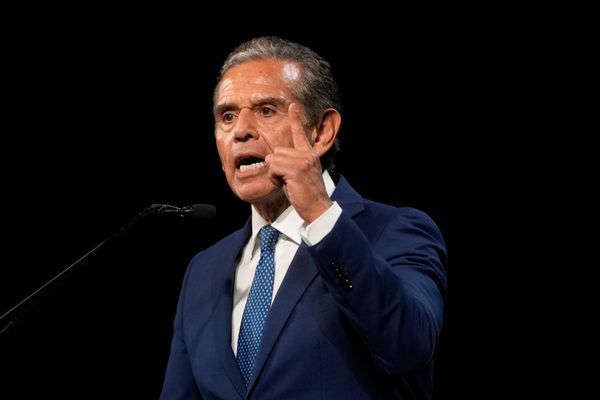

In a statement released on Friday, federal Education minister Jason Clare said that generative AI tools should only be used where they avoid unnecessary data collection, limit retention, prevent further distribution, and prohibit the sale of student data.

This is in addition to ensuring that “generative AI tools are used to respect and uphold privacy and data rights and comply with Australian law”, which was included in the draft framework released at the end of July.

The framework’s six guiding principles are teaching and learning, human and social wellbeing, transparency, fairness, accountability, and privacy, security and safety. It is expected to be reviewed at least every 12 months.

Overall, the final version of the document agreed to by ministers is broadly the same as the draft framework.

However, several guiding statements have been reworded and the number of guiding statements has increased from 22 to 25, with all of the additions relating to ‘teaching and learning’.

The final version adds a guiding statement relating to the improvement of ‘teacher expertise’ and expands the statement on ‘human cognition’ into ‘critical thinking’, ‘learning design’, and ‘academic integrity’.

While the framework also includes stronger language on the accountability of people using generative AI tools, there is some softening of the language around ‘explainability’.

The draft version of ‘explainability’ required that “generative AI tools are explainable, so that humans can understand the reasoning behind the AI model’s outputs or predictions, which includes understanding when algorithmic bias has influenced AI outputs”.

But the final version only requires that “vendors ensure that end users broadly understand the methods used by generative AI tools and their potential biases”.

The final framework comes a year after state governments rushed to implement temporary bans on the use of ChatGPT.

Development of a framework to guide the use of generative AI was agreed by education ministers in February and undertaken by the National AI in Schools Taskforce.

The taskforce included a consortium of representatives from the Commonwealth, state and territory governments, the Australian Education Research Organisation (AERO), the Australian Institute for Teaching and School Leadership (AITSL), and Education Services Australia.

Mr Clare said that the framework will help schools capitalise on the teaching and learning benefits of generative AI while mitigating the privacy and safety risks it poses to school children.

“Importantly, the framework highlights that schools should not use generative AI products that sell student data,” Mr Clare said.

“We will continue to review the Framework to keep pace with developments in generative AI and changes in technology.

“If we get this right, generative AI can help personalise education and make learning more compelling and effective, and this framework will help teachers and school communities maximise the potential of this new technology.”

Federal and state education ministers agreed on the framework in October during an Education Ministers Meeting (EMM), but it was not released at the time. Mr Clare released the document on Friday.

A federal parliamentary committee is currently undertaking an inquiry into the use of generative AI in the Australian education system following a referral from Mr Clare in May.

The inquiry has a particular focus on “ways in which [AI] can be used to improve education outcomes” and the “future impact generative AI tools will have on teaching and assessment practices”, according to the terms of reference.

A committee of the South Australian parliament also completed an inquiry into AI in November. South Australia is the only state to have never banned the use of generative AI tools in the classroom.

The South Australian government is trialling EdChat, a generative AI tool with “extra security features built in to safeguard student privacy and data”, in collaboration with Microsoft, according to Education, Training and Skills minister Blair Boyer.