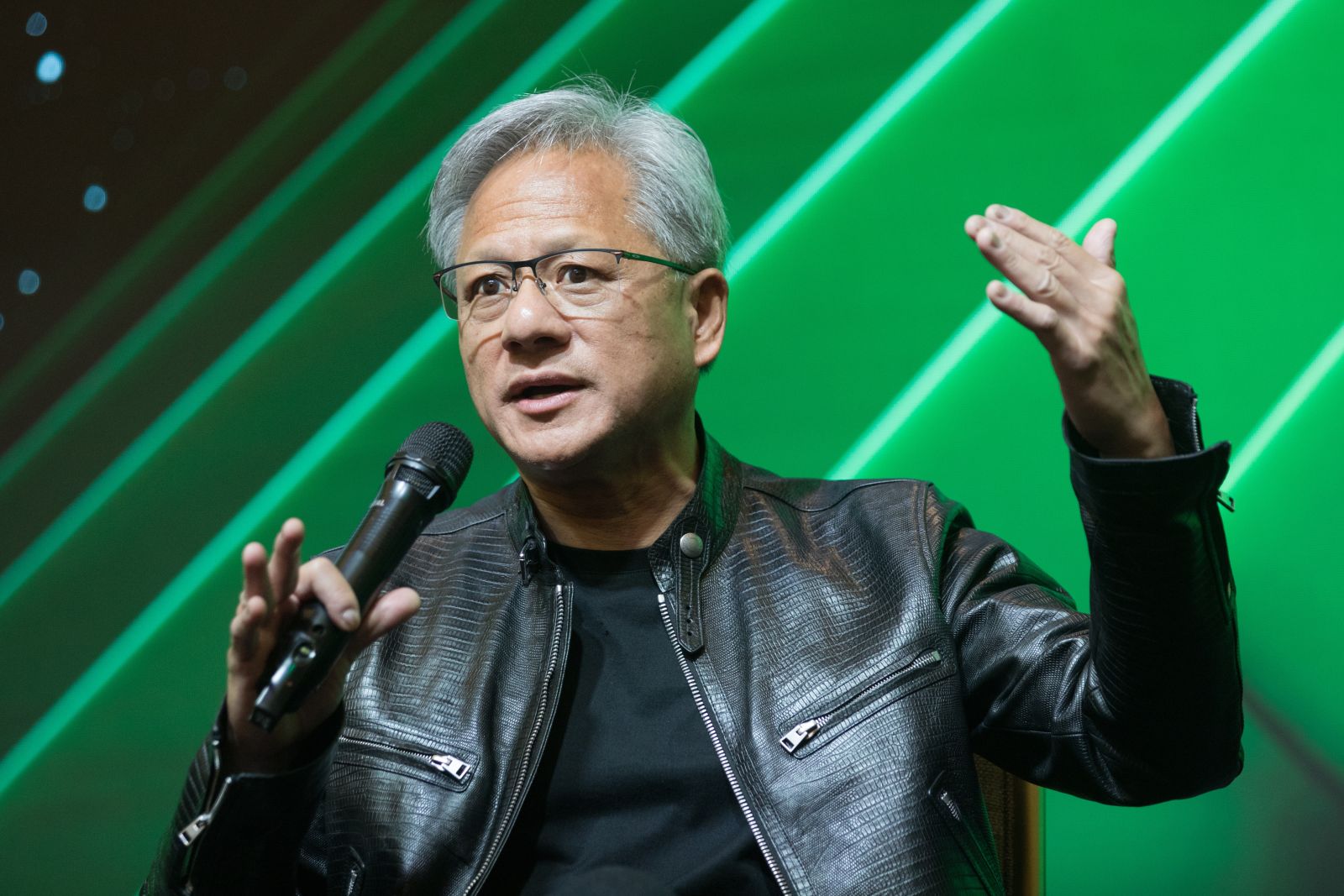

During NVIDIA’s (NVDA) recent Q2 2025 earnings call, CEO Jensen Huang commented on the sheer scale of the artificial intelligence (AI) revolution. Many of the world's biggest firms are already projecting astronomical levels of spending, yet Huang highlighted that those projections keep growing, in some instances to several times what was previously expected. According to forecasts, that spending could surpass a trillion dollars in short order. On average, it is expected to run at about $600 billion annually until at least the end of the decade.

Hyperscaler CapEx Surges to $600 Billion Annually

Responding to a question from Ben Reitzes, an analyst at Melius, Huang pointed out that just the top four hyperscalers — the largest global cloud providers — have doubled their collective capital expenditures in the past two years. Today, that spending has reached $600 billion annually, a figure Huang described as emblematic of the intensity of the “AI race.”

Huang explained, “As you know, the CapEx of just the top four hyperscalers has doubled in two years. As the AI revolution went into full steam, as the AI race is now on, the CapEx spend has doubled to $600 billion per year.” He continued, “There’s five years between now and the end of the decade, and six hundred billion dollars only represents the top four hyperscalers.”

Those hyperscalers are typically understood to include Amazon (AMZN), Alphabet (GOOG) (GOOGL), Meta (META), and Microsoft (MSFT), but Oracle (ORCL) is quickly making a name for itself in this space, as well.

Trillions in AI Infrastructure by the End of the Decade

Huang’s remarks came in response to Reitzes’ inquiry about NVIDIA’s estimates for $3–4 trillion in total data center infrastructure spend by 2030. That figure marks a sharp increase from earlier estimates of $1 trillion for compute by 2028, underscoring how quickly the market’s trajectory has shifted.

Huang emphasized that the $600 billion figure covers only the top four hyperscalers. It leaves out enterprises building their own on-premises infrastructure, as well as a growing number of regional and global cloud service providers.

“United States represents about 60% of the world’s compute. Over time, artificial intelligence would reflect GDP scale and growth, and of course accelerate GDP growth,” Huang noted.

NVIDIA Positions as an AI Infrastructure Company

While NVIDIA is still widely associated with its pioneering role in developing the GPU, Huang stressed that the company has transformed into a full-scale AI infrastructure provider. Constructing a modern AI supercomputer requires six different types of chips, he explained, in addition to hundreds of thousands of GPU compute nodes deployed at gigawatt-scale data centers.

“We’re really an AI infrastructure company, and we’re hoping to continue to contribute to growing this industry, making AI more useful, and very importantly, driving the performance per watt,” Huang said.

This focus on efficiency is critical, as Huang acknowledged that power availability will be a major constraint in scaling AI infrastructure. NVIDIA’s ability to deliver greater performance per unit of energy and per dollar spent, he argued, directly enhances the economics of these massive “AI factories.”

A Sensible $3–4 Trillion Path

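Despite the eye-popping numbers, Huang described the estimate of $3–4 trillion in data center investments over the next five years as “fairly sensible.” At the current pace of $600 billion a year, the top four hyperscalers alone would spend roughly $3 trillion over five years, before counting enterprises and other cloud providers. Beyond that arithmetic, the estimate rests on both the accelerating demand for AI workloads and the growing economic role of artificial intelligence.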

For NVIDIA, which already commands a dominant share of AI compute infrastructure, the implication is clear: the company’s share of that spending will remain significant, further cementing its role as the backbone of the AI economy.

On the date of publication, Caleb Naysmith did not have (either directly or indirectly) positions in any of the securities mentioned in this article. All information and data in this article is solely for informational purposes. For more information, please view the Barchart Disclosure Policy.