A few weeks ago, researchers in Singapore announced that they had found a way to make protein music sound better by using classical music as its building blocks. But why turn biochemical information about proteins into music in the first place? And why does it matter whether it sounds good?

Converting research data into sound is called “sonification”, and it has proved surprisingly useful across many fields of science. One of the best-known applications is the Geiger counter, which uses audible clicks to indicate the level of radiation nearby. Hearing those clicks speed up makes it much easier to notice a sudden local change in radioactivity than watching a display does.

Our ears can distinguish very small changes in patterns that are harder to spot by eye, so people listening to sonified data can pick up information they might otherwise miss. Sound is already used this way to analyse astronomy data sets, which are often so large and complex that patterns are hard to see; turning them into sound makes interesting features easier to pick out.

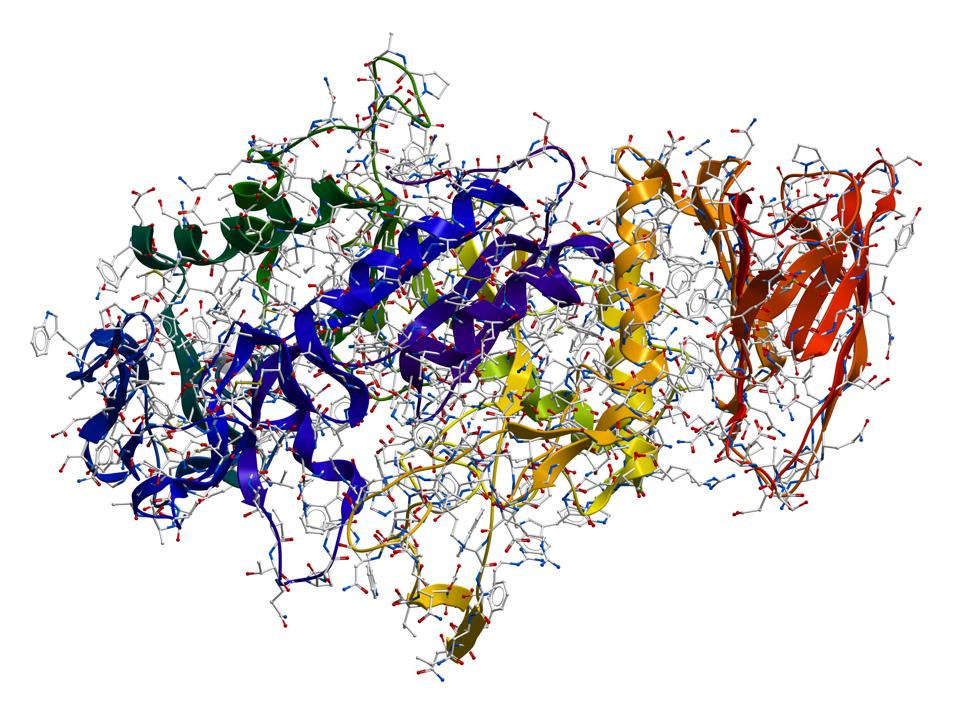

Proteins also contain large amounts of information that are difficult to assess by eye. They are the end product of genetic information: genes encode proteins, and those proteins carry out the functions associated with the genes. Much like genes, proteins can be read as code. They are usually shown as strings of letters or visualised as 3D structures, but the same information can also be turned into music.

Proteins are large, complex molecules, though, so a very small change in shape is hard to notice just by looking at a visual representation of the structure, particularly because proteins are always a little bit “wiggly”. An unusual pattern could be easier to spot by listening.

That’s one of the reasons for converting protein information to sound. Scientists can use different aspects of sound (pitch, volume, timbre) to convey different biologically relevant features of proteins, such as the amino acid sequence and the local and overall shape of the molecule. From there it is a small step to music, and over the years many researchers have created their own interpretations of protein music.
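To make the idea of mapping protein features onto sound more concrete, here is a minimal Python sketch built on an invented scheme. Each of the 20 standard amino acids is assigned a note on a C major scale (pitch), and a per-residue secondary-structure label (H for helix, E for strand, C for coil, a common shorthand) sets how loudly that note is played (MIDI velocity). The names `residue_to_pitch` and `sonify` and the velocity values are hypothetical illustrations, not the method used by any of the researchers mentioned here.

```python
# Hypothetical sonification scheme, for illustration only.

# The 20 standard amino acids as one-letter codes, in alphabetical order.
AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"

# Semitone offsets of the C major scale within one octave.
MAJOR_SCALE = [0, 2, 4, 5, 7, 9, 11]

# Assumed loudness (MIDI velocity) for each secondary-structure label.
VELOCITY = {"H": 100, "E": 80, "C": 50}


def residue_to_pitch(residue: str, base_note: int = 48) -> int:
    """Map one amino acid to a MIDI note number on a C major scale.

    Residues are laid out in alphabetical order, climbing the scale and
    moving up an octave every seven residues, starting at base_note (C3).
    """
    index = AMINO_ACIDS.index(residue)
    octave, step = divmod(index, len(MAJOR_SCALE))
    return base_note + 12 * octave + MAJOR_SCALE[step]


def sonify(sequence: str, structure: str) -> list[tuple[int, int]]:
    """Turn a sequence plus its structure labels into (pitch, velocity) notes."""
    if len(sequence) != len(structure):
        raise ValueError("sequence and structure must be the same length")
    return [
        (residue_to_pitch(residue), VELOCITY[label])
        for residue, label in zip(sequence, structure)
    ]
```

For example, `sonify("ACD", "HHC")` returns `[(48, 100), (50, 100), (52, 50)]`: three rising notes, with the coil residue played more quietly than the two helix residues. Real schemes are far richer and can also use timbre, rhythm and chords, but the principle of assigning each protein feature to a sound property is the same.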

But because proteins aren’t composers, the resulting music often sounds chaotic and is difficult to listen to. That’s why Yu Zong Chen and colleagues looked for a new way to convert protein information into sound, using classical music. They first analysed a style of classical music to determine how real music uses pitch, note length, octaves, chords, dynamics and main themes. They then used this information to build an algorithm that mapped features of proteins onto the kinds of musical features they had found in real music. The result sounded a lot more musical than randomly generated protein songs.

Still, why does it matter whether the music sounds good or not? There are a few reasons you might want protein music to be closer to a recognisable musical genre.

First, if protein music is being used to listen for changes in patterns, that is easier when the music is palatable, or even enjoyable. Second, protein music can be a way to share research with a wider audience, for example as a talking point at festivals. In that case, the music is more likely to attract listeners if it sounds closer to “real music” than to randomly generated tones. Data-as-music can also help share complex information with visually impaired people. Biochemistry relies heavily on visuals, and sound interpretations of protein structures could give people a similar sense of their complexity and patterns without seeing the images.

Finally, protein music isn’t only for human ears. It can be used as AI input to analyse features and patterns of proteins, or even as a way to design new proteins. To be fair, though, an AI won’t care whether the music sounds good; that benefit is purely for us humans.