Social media is currently a graveyard of "Expert Prompt" cheat sheets. Neatly designed carousels on LinkedIn and Instagram promise to turn ChatGPT into everything from a Wall Street analyst to a tax strategist. But do they actually work? Or is "Role Prompting" just a placebo for better AI performance?

I spent some time stress-testing five of the most viral frameworks. I moved past the hype to see which ones produced actual value — and which ones were just hallucination traps. Some genuinely improved the responses. Others… not so much.

Here’s what happened.

| Prompt | Grade | Verdict |
| --- | --- | --- |
| Expert educator | A- | Incredible for structure and roadmaps. |
| Social strategist | A | A genuine game-changer for creators. |
| Competitive analyst | B+ | Fast, but lacks "fresh" secret data. |
| Financial analyst | B- | Good for "Bull vs. Bear" logic; bad for math. |
| Tax strategist | C | Dangerous. Don't trust it with the IRS. |

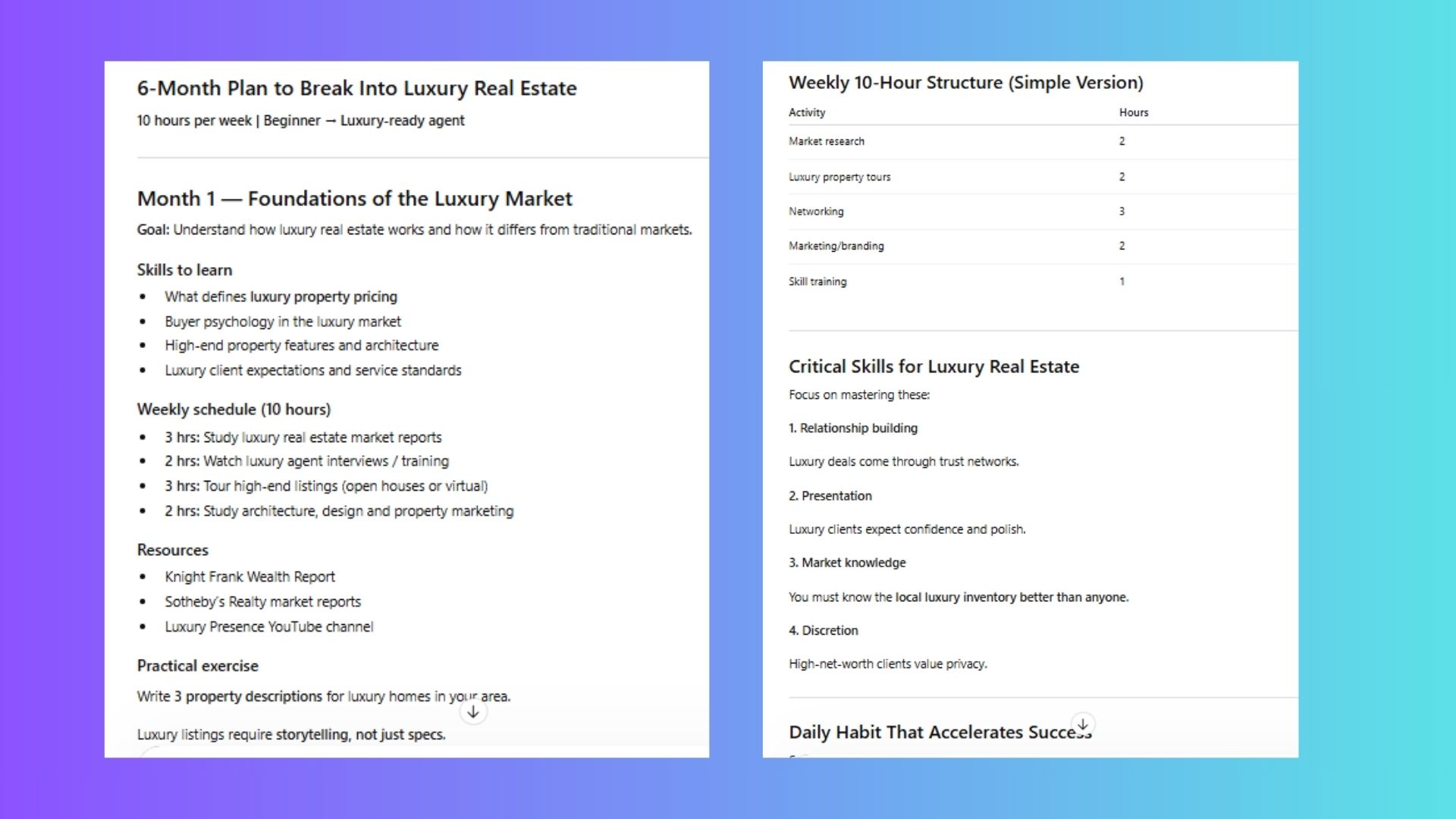

1. The 'Expert Educator'

The Claim: It creates a bespoke curriculum better than a $500 online course.

The Prompt: “You are an expert educator specializing in [Subject]. Create a 6-month personalized learning plan for [Skill]. My goal is [Goal]. I have [X] hours per week.”

The Test: I asked it to teach me the luxury real estate ropes (inspired by Owning Manhattan). My goal? Sell a $2M home in a year with 10 hours of study a week.

The Result: Surprisingly deep. It didn’t just give me a list of books; it built a week-by-week curriculum, suggested practical "field projects," and identified the exact licensing requirements for my area.

Why it works: Adding a concrete goal and a time constraint forces the AI to stop being generic and start being realistic.
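If you reuse this framework often, it can help to treat the prompt as a fill-in-the-blanks template so no bracket gets left vague. Here is a minimal Python sketch of that idea. The function name `build_educator_prompt` and its validation rules are my own illustration, not part of the viral framework, and the example values are just a paraphrase of my test.

```python
# Hypothetical helper: fills the "Expert Educator" prompt and refuses
# to build it if any bracketed slot is left empty.
EDUCATOR_TEMPLATE = (
    "You are an expert educator specializing in {subject}. "
    "Create a 6-month personalized learning plan for {skill}. "
    "My goal is {goal}. I have {hours} hours per week."
)


def build_educator_prompt(subject: str, skill: str, goal: str, hours: int) -> str:
    """Return the filled-in prompt, or raise ValueError on a blank slot."""
    for name, value in {"subject": subject, "skill": skill, "goal": goal}.items():
        if not value.strip():
            raise ValueError(f"{name} must not be empty")
    if hours <= 0:
        raise ValueError("hours must be positive")
    return EDUCATOR_TEMPLATE.format(
        subject=subject, skill=skill, goal=goal, hours=hours
    )


# Example values loosely based on the test described above.
prompt = build_educator_prompt(
    subject="luxury real estate",
    skill="selling high-end homes",
    goal="to sell a $2M home within a year",
    hours=10,
)
print(prompt)
```

The point of the validation is the same as the point of the framework: the specifics do the work, so a template with an empty slot is no better than a generic prompt.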

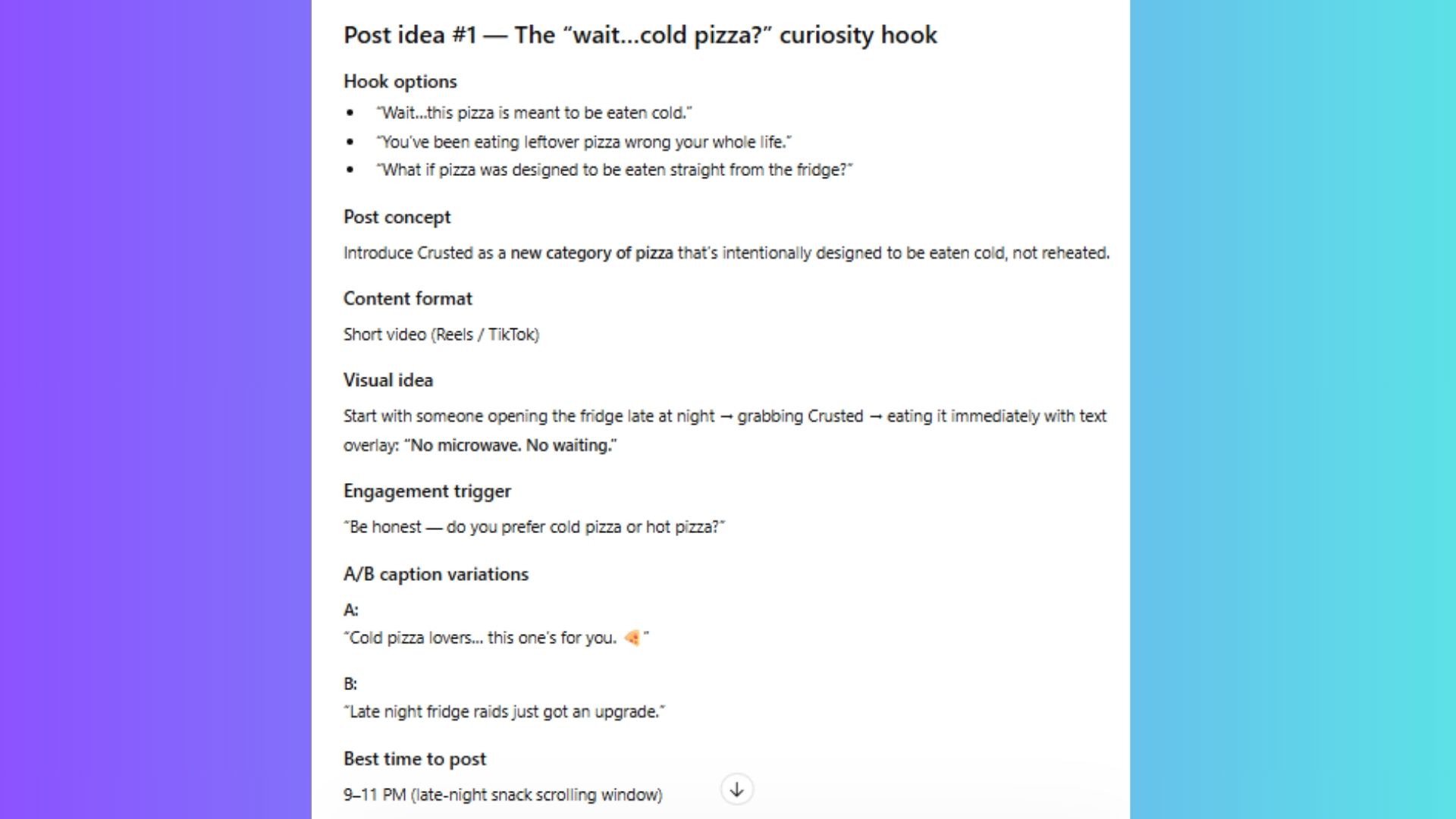

2. The 'Social Media Strategist'

The Claim: It replaces a full social media team.

The Prompt: “Act as a viral strategist. Generate 5 post ideas about [Topic]. Include 3 scroll-stopping hooks, visual concepts, and A/B caption variations for each.”

The Test: I used it for my fake startup: Crusted (ready-to-eat cold pizza).

The Result: This was the clear winner. Instead of "Write a post about pizza," it gave me carousel outlines, "scroll-stopper" hooks like "Why hot pizza is holding your productivity back," and specific engagement triggers.

Pro Tip: The visual concepts it generates are perfect for feeding into video tools like Sora or Veo.

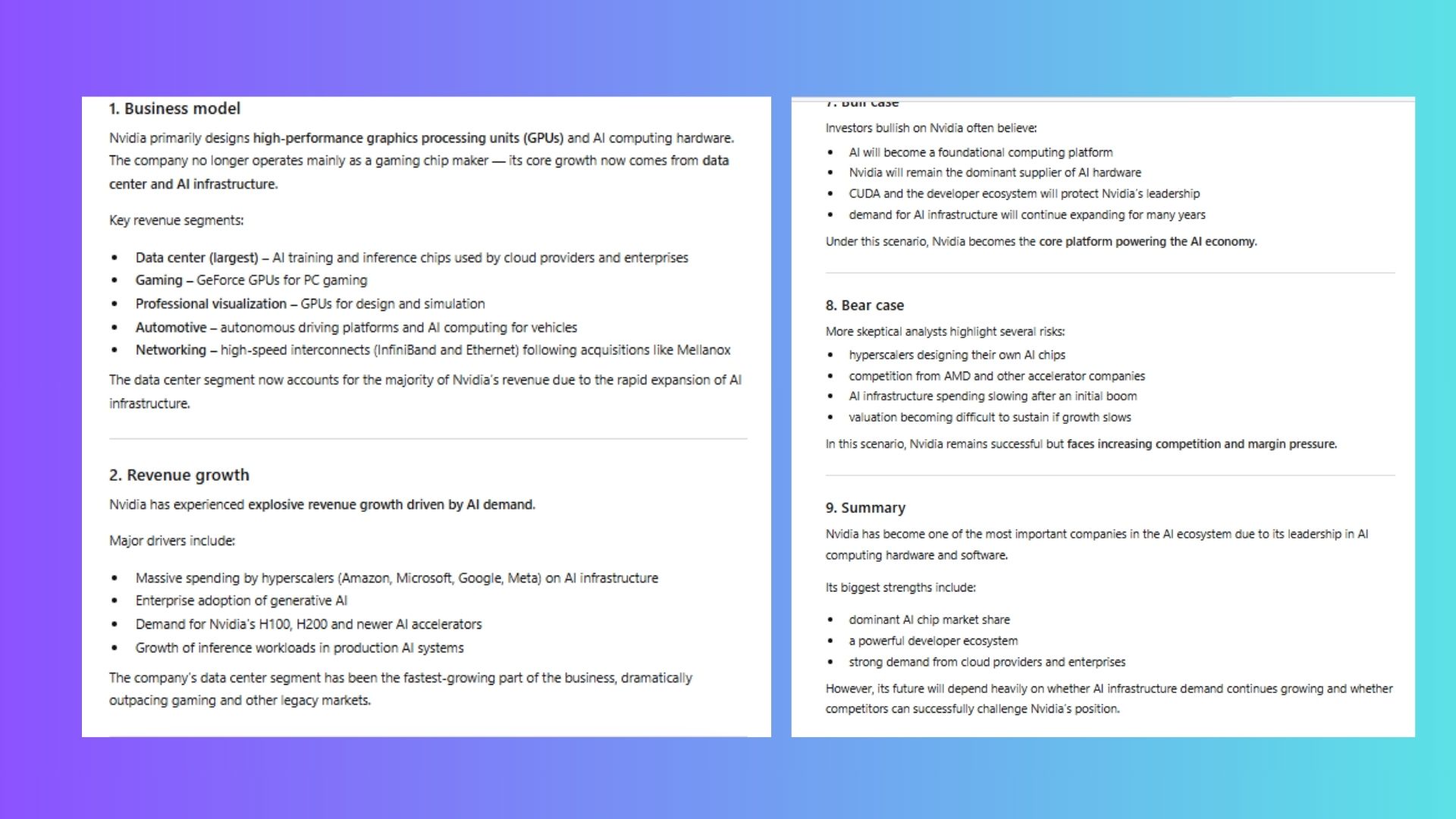

3. The 'Financial Analyst'

The Claim: Wall Street-level equity research in seconds.

The Prompt: “Act as a professional equity research analyst. Evaluate [Company] using: Business Model, Moat, Valuation Metrics, and Bull/Bear cases.”

The Test: I ran a deep dive on Nvidia, since it's among the most popular stocks at the moment.

The Result: It generated a professional-looking "Research Note." The Bull/Bear cases were logically sound, but here’s the catch: ChatGPT is still unreliable at arithmetic and may be working from stale figures. It can explain why a stock might go up, but don't trust its P/E ratio calculations without double-checking.

Verdict: Great for summarizing sentiment; terrible for actual accounting.
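Double-checking a P/E ratio takes only a few lines: it is the share price divided by earnings per share. The sketch below is one way to sanity-check a figure the AI quotes. The function names, the 5% tolerance, and the numbers are all placeholders I made up for illustration; they are not Nvidia's actual price or earnings, so plug in figures from a source you trust.

```python
from typing import Optional


def pe_ratio(price_per_share: float, eps: float) -> Optional[float]:
    """Price-to-earnings ratio, or None when EPS is zero or negative."""
    if eps <= 0:
        return None  # P/E is not meaningful without positive earnings
    return price_per_share / eps


def claim_is_plausible(
    claimed_pe: float, price_per_share: float, eps: float, tolerance: float = 0.05
) -> bool:
    """True if the claimed P/E is within `tolerance` (relative) of the computed one."""
    actual = pe_ratio(price_per_share, eps)
    if actual is None:
        return False
    return abs(claimed_pe - actual) / actual <= tolerance


# Placeholder inputs, NOT real market data.
print(pe_ratio(100.0, 4.0))                  # 25.0
print(claim_is_plausible(25.5, 100.0, 4.0))  # True: within 5% of 25.0
print(claim_is_plausible(40.0, 100.0, 4.0))  # False: far from 25.0
```

If the AI's number fails a check like this, treat the rest of the valuation section with the same suspicion.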

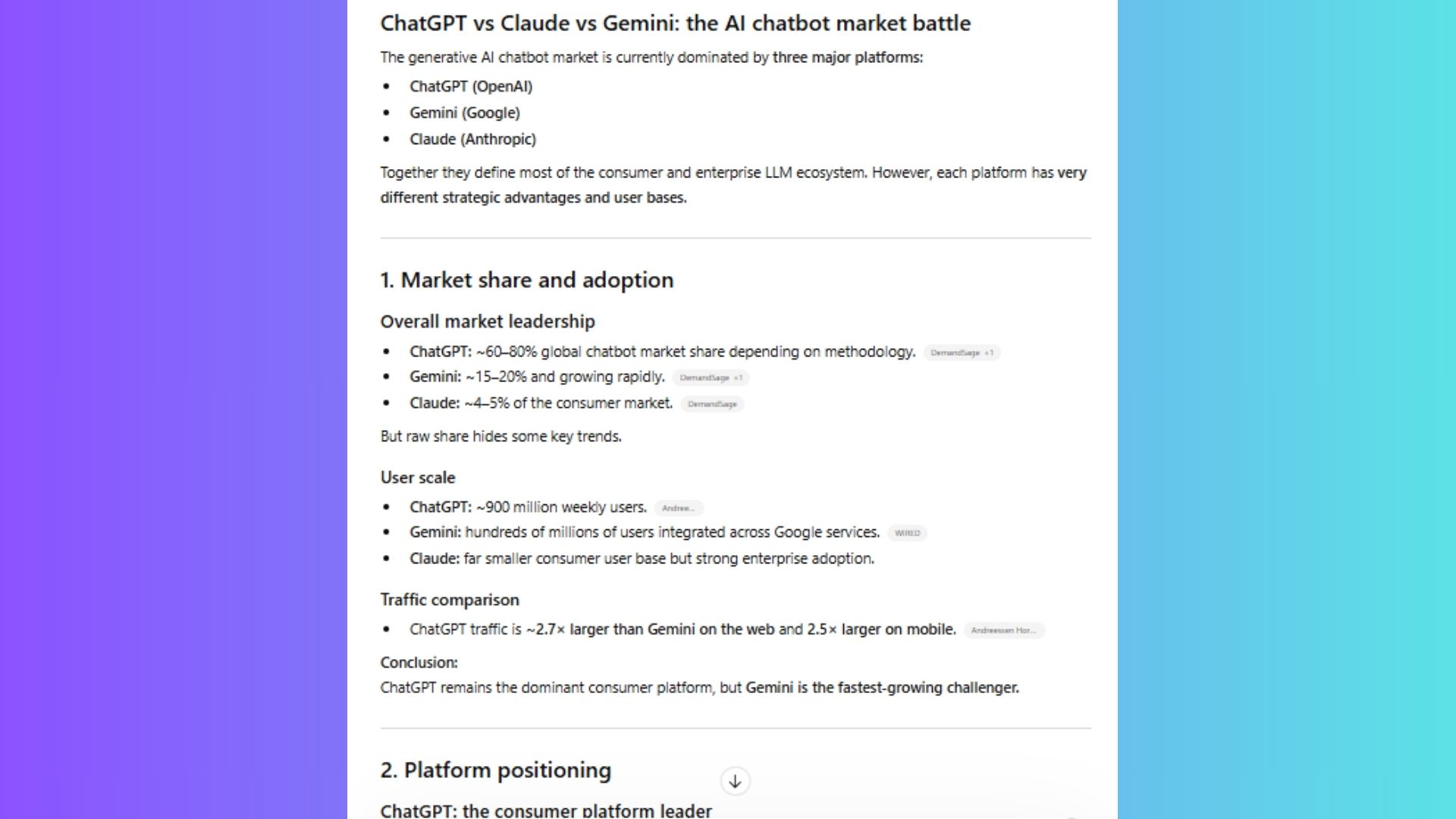

4. The 'Competitive Intelligence' prompt

The Claim: Instant SWOT analysis of any market.

The Prompt: “Analyze [Company A] vs [Company B] across: Product features, Pricing, Target Customer, and Marketing messaging.”

The Test: ChatGPT vs. Claude vs. Gemini.

The Result: It shifted into a "Reasoning Mode" that pulled out specific positioning differences I hadn't considered. It identified Claude’s "creative writing" edge vs. Gemini’s "ecosystem" edge instantly.

Verdict: It's a massive time-saver for first-round market research, but definitely not a replacement for a professional any time soon.

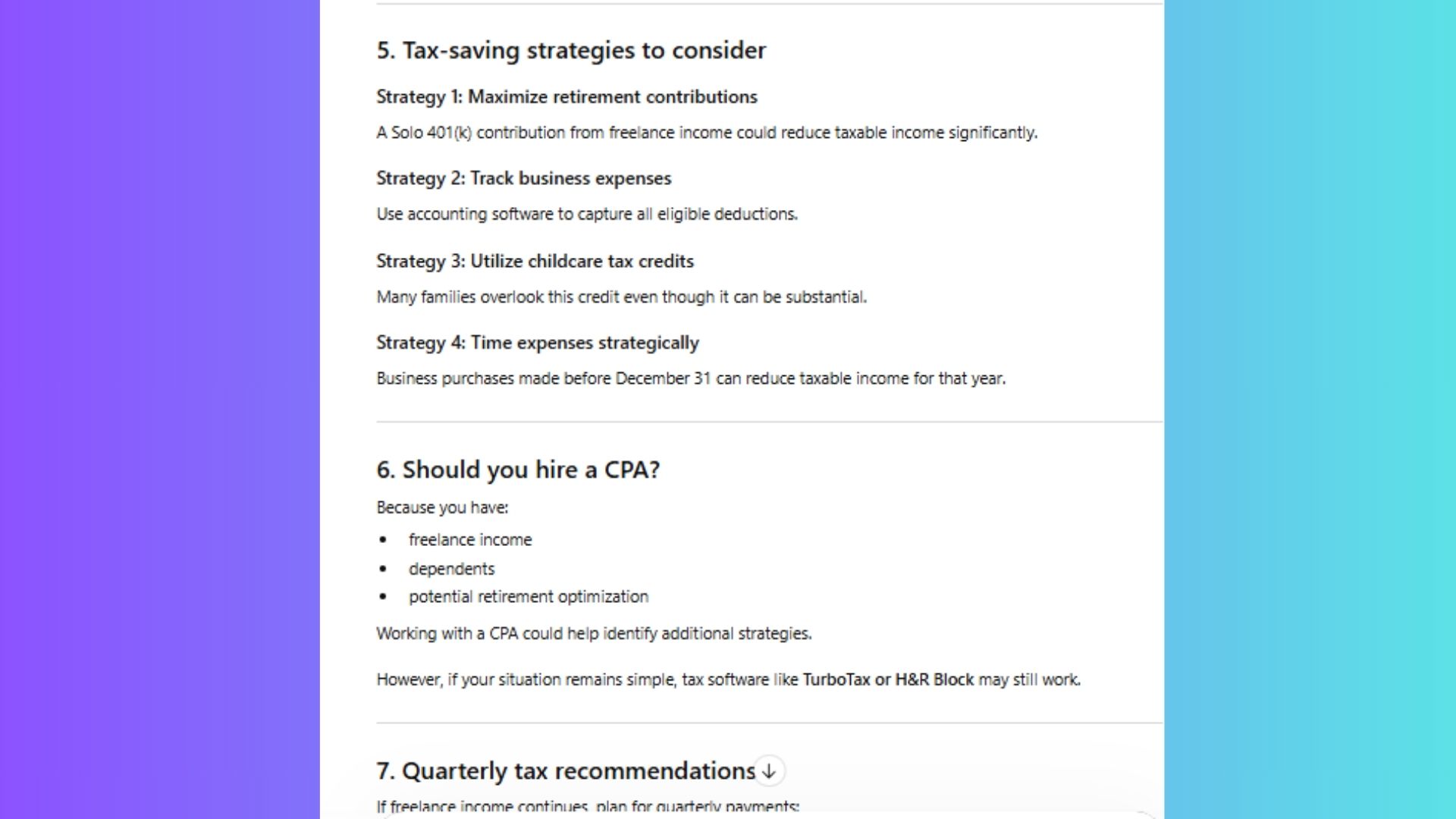

5. The 'Tax Strategist'

The Claim: Maximize deductions and "minimize liability."

The Prompt: “You are a tax strategist and CPA. Help me maximize deductions for [Tax Year] based on [Income/State].”

The Test: A standard 1099 independent contractor scenario.

The Result: This is where the "Expert" façade crumbles. While it provided a decent checklist, it missed specific state-level nuances and recent 2024/2025 law changes. This is a huge red flag and, frankly, very dangerous. In a viral world, this prompt is "helpful." In the real world, it’s a liability.

Verdict: It might be helpful for brainstorming, but never for filing. Do not fire your accountant. AI should never take the place of a human professional.

Final thoughts

After running these tests, the reality is clear: "Expert Prompts" do not magically increase the AI’s IQ. ChatGPT doesn't suddenly "become" a CPA or a Wall Street titan just because you told it to.

However, these frameworks are highly effective for one reason: They solve the "Vagueness Trap." When you give a basic prompt, the AI has nothing to narrow its answer down, so it falls back on a generic average. When you use an "Expert Framework," you are essentially giving the AI a GPS coordinate. You aren’t making it smarter; you’re making it focused.

The frameworks that actually work treat AI like a highly capable assistant. But it shouldn’t be treated as an expert or a replacement for a human professional, especially when the stakes are high, as with financial or health decisions. The takeaway here is this: AI works best when it augments human judgment rather than replacing it.