For nearly three decades, my mornings have started the same way: running shoes on, out the door before sunrise, miles logged before most people finish their first cup of coffee. But the hardest part of staying fit was never the run. It was everything that came after.

If you’ve ever tried to lose weight, train for a race or simply eat healthier, you know the routine: scan barcodes, log ingredients, weigh portions, repeat. What starts as motivation quickly turns into tedious admin, and the burnout is real.

That’s why the idea of using AI glasses to track calories for me instantly caught my attention. If they could remove the most annoying part of healthy eating, I wanted to know.

With Meta’s April 2026 update, that frustration vanished for me. The update integrates the Muse Spark multimodal model, and it has once again made my Ray-Ban Meta Display glasses useful, even beyond translating the Super Bowl halftime show or shopping at Target.

Here’s what it’s actually like to let your eyewear audit your plate.

Less counting, more enjoyment

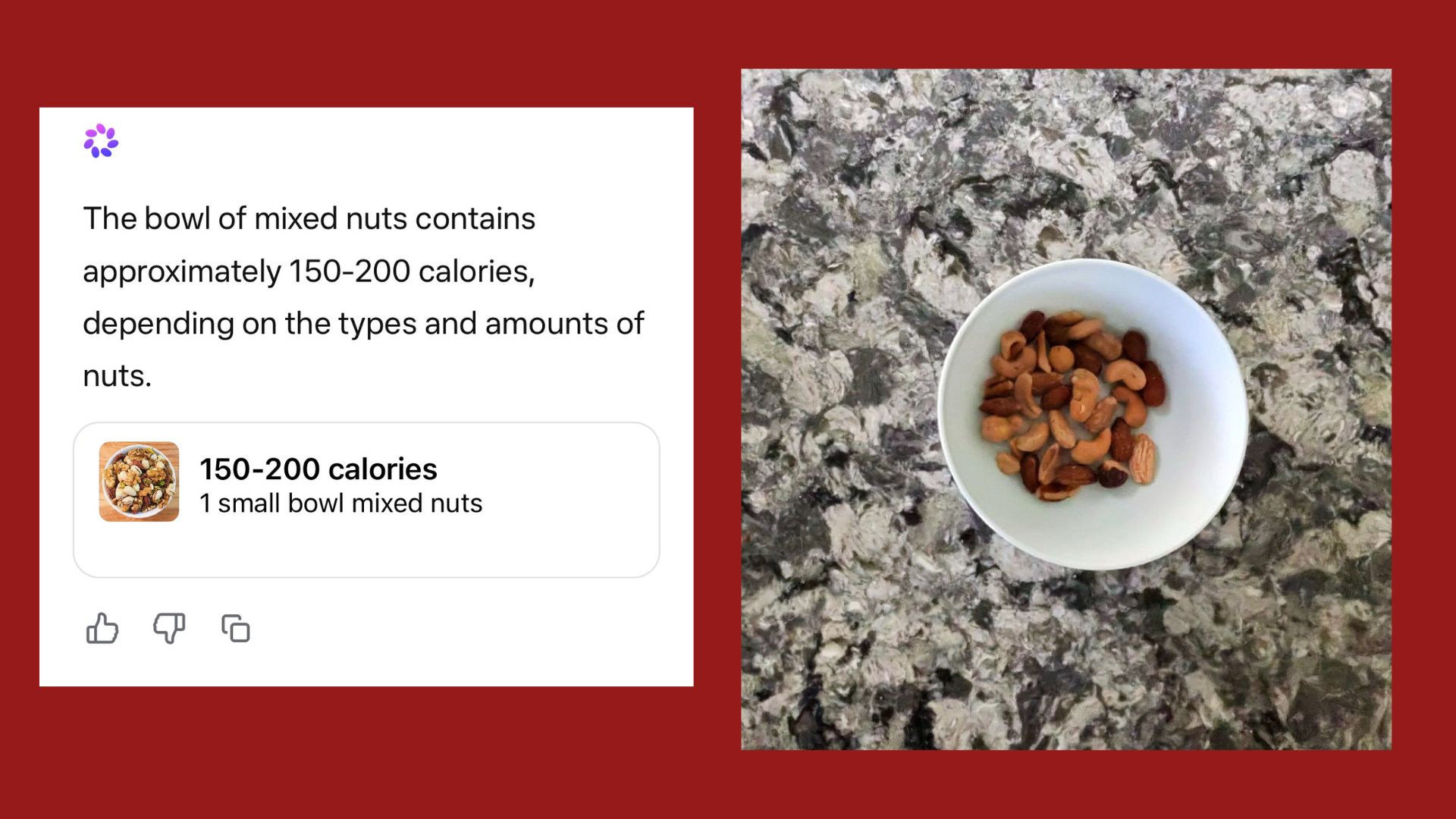

Standing in my kitchen with a pre-run snack used to involve a "stop and scan" moment. Now, I simply put on my Ray-Ban Meta Display glasses and ask:

"Hey Meta, tell me approximately the calories in what I’m eating."

Through the display, I saw the glasses instantly outline the banana and a handful of almonds using Muse Spark’s multimodal segmentation. A small overlay appeared in my line of sight: 105 calories for the banana, 160 for the almonds.

In my experience, the glasses estimated the calories far more accurately than a standard flat photo could. I now do this throughout the day with whatever I eat, whether it's a salad, an apple or even a cheeseburger.

Beyond the label

The real test happened at my local coffee shop. As I looked at my cup, the glasses identified the Starbucks logo and the size of the container. Because I had previously synced my preferences in the Meta View app, they also knew I'd opted for oat milk.

The glasses estimated the sugar content, and I added it to my food log before I even took the first sip. To me, this is one of the coolest things about AI: this kind of data quietly exists at the periphery of your life, and AI can spot patterns and fill in the details based on its training data.

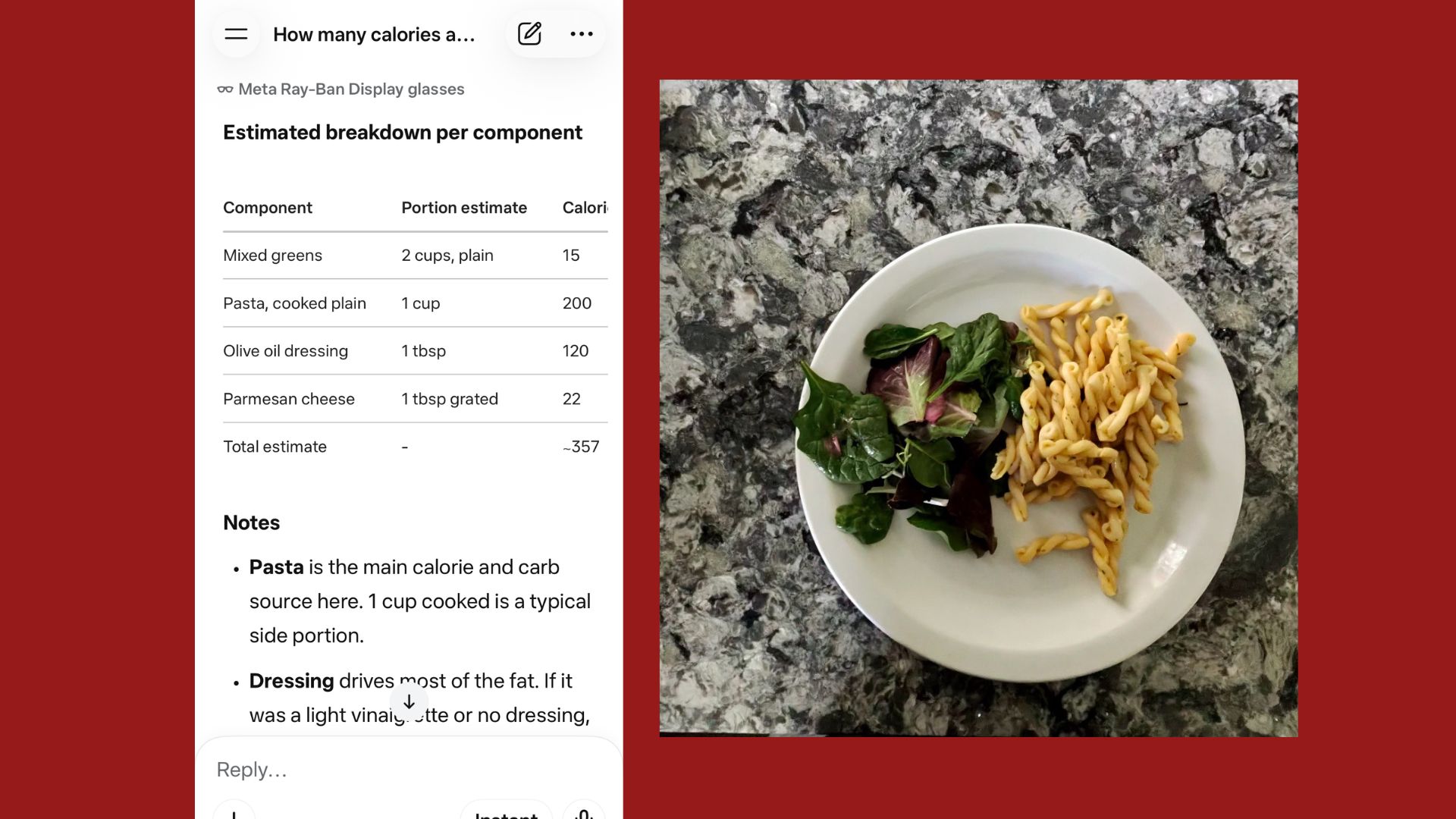

Can AI really 'see' a recipe?

Of course, this experiment wouldn't be a true test without an accuracy challenge. Identifying a whole apple and pulling up fairly accurate data for it isn't hard; a home-cooked chili is much harder. To stress-test the model, I used a "context hack." While sautéing onions, I looked at the pan and said, "Meta, I’m adding two tablespoons of olive oil and a pound of lean ground turkey."

Later, when I sat down to eat, the glasses didn’t just see "chili." They recalled the ingredients I had mentioned thirty minutes earlier. For me, this local processing solves the biggest hurdle in AI nutrition tracking: the hidden fats and sugars that a camera alone can’t see. The numbers are still estimates, but that's better than not knowing at all (or trying to do the math manually, which is not my strong suit).

Social stealth with the Neural Band

I have to say that the most futuristic moment happened at a recent party. I don't usually wear the Meta Display glasses around people (talking to your glasses while your friends are passing the salad is a social non-starter), but the Meta Neural Band changed the game.

When a notification appeared in my view asking me to confirm a "Large Garden Salad," I made a subtle "pinch" gesture with my hand under the table. The band’s sensors picked up the motor intent and confirmed the entry. It was the first time calorie tracking felt truly invisible.

Bottom line

Using Ray-Ban Meta glasses to help me eat healthier was not something I had on my 2026 bingo card. But after trying it firsthand, it feels like an early glimpse of how AI could reshape personal wellness.

With tools like Muse Spark and the Ray-Ban Meta Display, we may be moving toward a future of ambient health tracking, where nutrition is monitored with the same low-effort convenience as steps, sleep or heart rate. Instead of you manually logging every bite, the tracking starts to happen in the background.

The calorie estimates are still approximate, but for me, that’s enough. As a runner, it could mean the end of data-entry burnout after a long training run. As a parent, it offers one less mental task while I try to keep an eye on how much sugar my kids consume. And as someone who covers AI for a living, it feels like one of the clearest real-world examples yet of wearable AI becoming genuinely useful.

I’m still experimenting, but one thing is clear: smart glasses are starting to do a lot more than just take photos.
