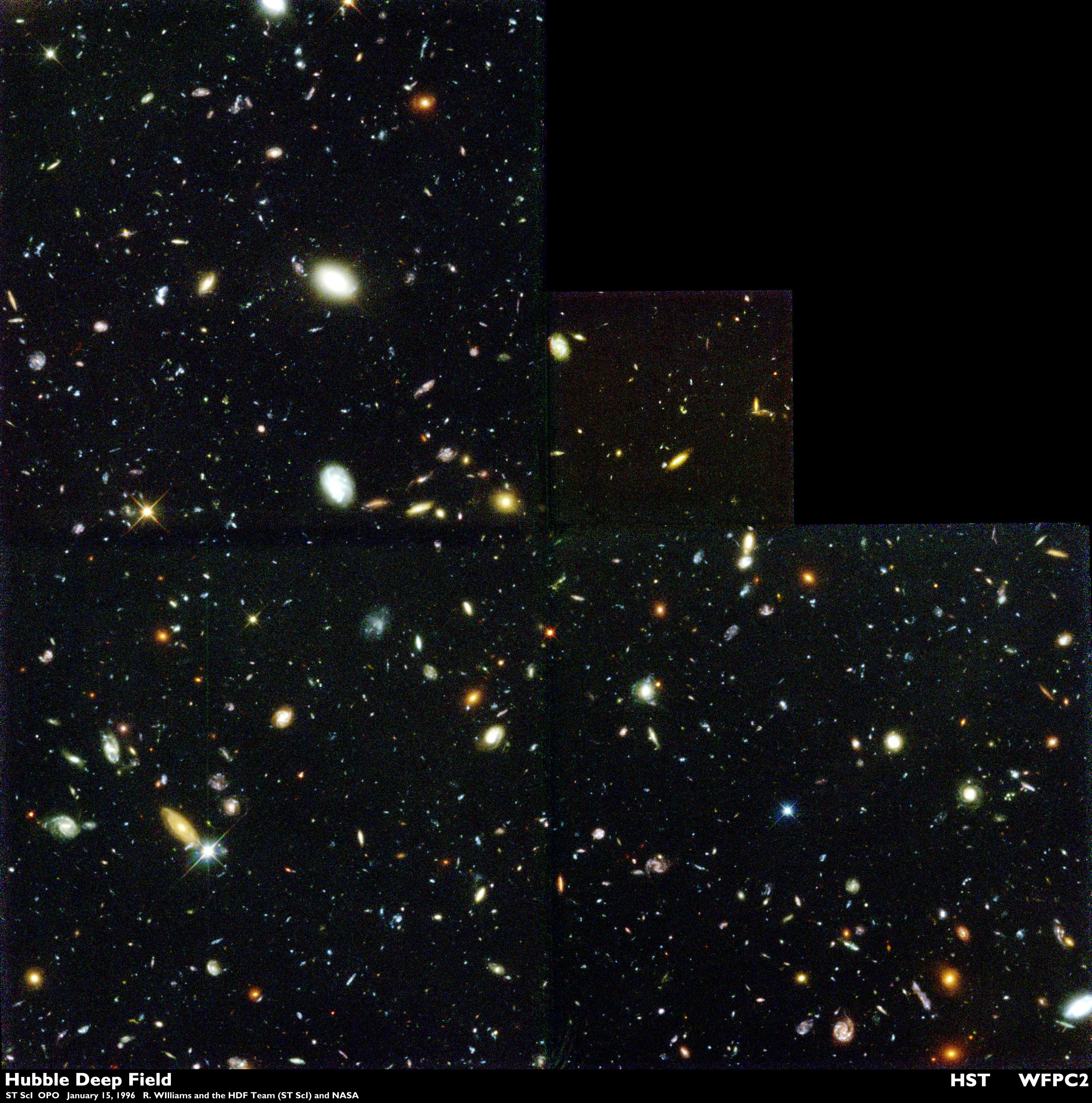

In December 1995, the Hubble Space Telescope spent 10 consecutive days staring at one small region of the sky.

With more than 100 hours of exposure time and 342 separate exposures, the telescope captured one of its most iconic and important images: a deep-space image that revealed almost 3,000 ancient galaxies dating back to the very early universe.

The Hubble Deep Field North was a major feat in deep space photography. And since then, things have only gotten better.

With the recent launch of the James Webb Space Telescope (JWST), astronomers will be able to peer into hidden regions of space. JWST is engineered to detect light outside of the visible range, producing images of the faintest and most distant objects. But this presents its own challenges: How do you represent what the human eye can’t see? How do you turn several snapshots into a cohesive photo?

As we anticipate the release of JWST’s first images this summer, Inverse spoke with Jonathan McDowell, an astrophysicist at the Center for Astrophysics and the Chandra X-ray Center who has worked extensively on the Chandra X-ray Observatory mission.

Chandra, launched in 1999, looks at the cosmos in X-rays, which are far from what our eyes can see, but it's where things like black holes and other hyper-energetic objects shine most brightly. Like Chandra, JWST will be looking at the cosmos in wavelengths outside of what the human eye can observe. In JWST's case, it's infrared, which traces the heat that objects give off. Though the two wavelength ranges sit far apart on the spectrum, they present similar challenges: taking that data and making a scientifically useful image, and one that can be processed to present to the public.

McDowell helped us break down how a space image is captured, developed, and processed to produce the awe-inspiring result that we see.

Step 1: Pointing the telescope

The first celestial object to ever be photographed was the Moon.

In 1840, British physicist John William Draper captured the surface of the Moon from his rooftop observatory at New York University. The Moon is a mere 238,855 miles away from Earth. But today, space telescopes are able to capture images of objects located millions of light years away.

“The first thing you've got to do is point the telescope in the right direction,” McDowell tells Inverse.

That alone can be tricky since space telescopes follow a certain orbit, traveling at a high speed. In order to figure out which direction to point at, astronomers first have to figure out where they currently are.

Small auxiliary telescopes on board snap photos of stars. By matching those photos against the known positions of familiar stars, astronomers can work out which direction in the sky the telescope is currently pointing.

Then, using the coordinates of the target object, astronomers point the telescope in its direction.
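To make the pointing step concrete, here is a minimal sketch of how far a telescope would need to turn between where it is pointing now and where the target sits. It assumes both positions are given as right ascension and declination in degrees; the function names and the example coordinates are hypothetical, not taken from any mission's software.

```python
import math

def radec_to_unit_vector(ra_deg, dec_deg):
    """Convert right ascension and declination (degrees) to a 3D unit vector."""
    ra = math.radians(ra_deg)
    dec = math.radians(dec_deg)
    return (
        math.cos(dec) * math.cos(ra),
        math.cos(dec) * math.sin(ra),
        math.sin(dec),
    )

def angular_separation_deg(ra1, dec1, ra2, dec2):
    """Angle in degrees between two positions on the sky."""
    a = radec_to_unit_vector(ra1, dec1)
    b = radec_to_unit_vector(ra2, dec2)
    dot = sum(x * y for x, y in zip(a, b))
    # Clamp to guard against floating-point drift just outside [-1, 1].
    dot = max(-1.0, min(1.0, dot))
    return math.degrees(math.acos(dot))

# Hypothetical values: the pointing a star tracker might report, and a target.
current_pointing = (10.0, 20.0)  # (RA, Dec) in degrees
target = (10.0, 50.0)            # (RA, Dec) in degrees
slew_angle = angular_separation_deg(*current_pointing, *target)
print(f"Turn the telescope by about {slew_angle:.1f} degrees")
```

A real spacecraft also has to work out its roll around the line of sight and plan a safe path for the turn, which this sketch leaves out.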

Step 2: Calibration

Before the telescope snaps the image, a lot of time is spent calibrating. McDowell, who has worked on the Chandra X-ray Observatory imaging some of the most energetic phenomena in the universe, says that when calibrating a camera, there are some important baselines.

“We spend sometimes half our time taking pictures of things we already know about,” McDowell says. “We'll take pictures to check the sensitivity of the camera, make sure that the geometry of the camera is right, or take a picture of the star cluster where you know how far apart the stars are and that tells you the scale of your picture.”
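The star-cluster check McDowell describes comes down to simple arithmetic: if two stars are known to sit a certain angle apart on the sky, the distance between them in pixels gives the scale of the picture. The sketch below assumes a known separation in arcseconds and made-up pixel positions; `plate_scale` is a hypothetical helper name.

```python
import math

def plate_scale(known_sep_arcsec, star_a_px, star_b_px):
    """Arcseconds of sky per pixel, from two stars a known angle apart."""
    pixel_sep = math.dist(star_a_px, star_b_px)
    return known_sep_arcsec / pixel_sep

# Hypothetical calibration pair: 12 arcseconds apart on the sky,
# measured at these (x, y) pixel positions on the detector.
scale = plate_scale(12.0, (100.0, 100.0), (130.0, 140.0))
print(f"{scale:.2f} arcseconds per pixel")
```

In practice astronomers would average over many star pairs rather than trusting a single measurement.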

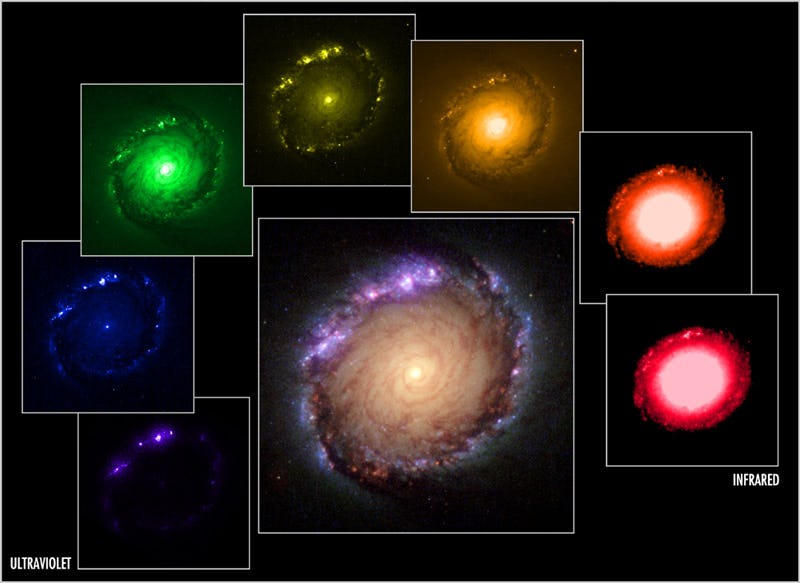

Different wavelengths allow scientists to see different parts of the universe, revealing the intricate details of hot gas traveling from a supernova explosion.

Visible light has relatively short wavelengths, which makes it more likely to bounce off surrounding particles and scatter. Infrared light's longer wavelengths make their way through gas and dust more effectively, allowing astronomers to look farther out into the cosmos.

Step 3: Snap!

Once the telescope is aimed in the right direction, light falls into the telescope and onto the camera. The telescope's camera technology is similar to what's found in our phones or in digital cameras, according to McDowell.

“The light falls on the camera, and if the light is redder, then it has more energy,” McDowell says. “And you take separate red, green and blue images and then put them together to make a color image.”

Rather than use a traditional film camera for this, Hubble records incoming photons of light with a charge-coupled device (CCD).

A CCD doesn't measure the color of the incoming light, but the telescope has filters that can be applied to let in only a specific wavelength range, or color, of light. Hubble takes images of the same object through different filters, and those images are then combined to create one comprehensive picture.
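Combining filtered exposures can be sketched in a few lines. The example below assumes three tiny grayscale frames of raw counts, one per filter, already lined up with each other. It rescales each frame to the 0 to 255 range and stacks them into red, green, and blue channels. The frame values and function names are made up for illustration and are not Hubble's actual pipeline.

```python
def normalize(frame):
    """Rescale a 2D list of raw counts to integers from 0 to 255."""
    flat = [value for row in frame for value in row]
    lo, hi = min(flat), max(flat)
    span = (hi - lo) or 1  # avoid dividing by zero on a perfectly flat frame
    return [[round(255 * (value - lo) / span) for value in row] for row in frame]

def compose_rgb(red_frame, green_frame, blue_frame):
    """Stack three same-sized grayscale frames into rows of (R, G, B) pixels."""
    r, g, b = (normalize(f) for f in (red_frame, green_frame, blue_frame))
    return [
        [(r[y][x], g[y][x], b[y][x]) for x in range(len(r[0]))]
        for y in range(len(r))
    ]

# Hypothetical 2x2 exposures taken through three different filters.
long_wave = [[0, 10], [20, 40]]     # shown as red
mid_wave = [[5, 5], [5, 5]]         # shown as green
short_wave = [[100, 50], [0, 25]]   # shown as blue

image = compose_rgb(long_wave, mid_wave, short_wave)
print(image[0][0], image[1][1])
```

Which filter feeds which color channel is a choice made by whoever builds the image, which is why the same object can look quite different from one release to the next.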

Step 4: Edit

To get a space picture ready for the general public, astronomers have to do a little processing. Most objects in space are too faint for the human eye to see in color. Sometimes, scientists also have to assign visible colors to filters that capture light the human eye cannot see at all.

For the Hubble image of the Cat's Eye Nebula, scientists assigned red, blue, and green to radiation from hydrogen atoms, oxygen atoms, and nitrogen ions. In reality, all three types of radiation are narrow wavelengths of red that the human eye could not tell apart.

Step 5: Give it context

A space image without any data is just a picture. But scientists use these images to gather data on cosmic objects.

“So now you've got a frame which is just a picture, but with no context,” McDowell says. “You have to apply where was the spacecraft pointing, what is the scale of the spacecraft, what corrections do you have to make to the data sensitivity depending on perhaps today the camera is point one degrees cooler than it was yesterday.”

This is done in order to provide context to the image that you see.
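As a rough illustration of what "context" means here, the sketch below turns a pixel position into a sky position using just two pieces of information: where the telescope was pointing and the scale of the picture. It is a simplified flat approximation that assumes a small field of view with north up and east to the left, and it ignores rotation and lens distortion. All names and numbers are hypothetical.

```python
import math

def pixel_to_sky(x, y, ref_pixel, ref_radec_deg, scale_arcsec_per_px):
    """Approximate (RA, Dec) in degrees for a pixel near the reference pixel."""
    dx = x - ref_pixel[0]
    dy = y - ref_pixel[1]
    ra0, dec0 = ref_radec_deg
    # Moving up the image (increasing y) moves north in this assumed layout.
    dec = dec0 + dy * scale_arcsec_per_px / 3600.0
    # East is assumed to be on the left, so RA grows as x shrinks. Lines of
    # RA crowd together away from the celestial equator, hence the cos(dec0).
    ra = ra0 - dx * scale_arcsec_per_px / 3600.0 / math.cos(math.radians(dec0))
    return ra, dec

# Hypothetical frame: pixel (512, 512) was aimed at RA 100 deg, Dec 0 deg,
# and each pixel covers 1 arcsecond of sky.
ra, dec = pixel_to_sky(
    x=512 - 3600, y=512 + 1800,
    ref_pixel=(512, 512),
    ref_radec_deg=(100.0, 0.0),
    scale_arcsec_per_px=1.0,
)
print(f"RA {ra:.2f} deg, Dec {dec:.2f} deg")
```

Real pipelines store this mapping alongside the image and also apply the sensitivity corrections McDowell mentions, which this sketch does not attempt.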

“All of that contextual information has to be applied to give you a scientifically useful image rather than any picture,” McDowell says.

“It’s not just a pretty picture,” he adds. “It’s a pretty picture that you can measure numbers off of.”