Earlier this week, during the Android Show, Google announced it is rolling out a feature called “Contextual suggestions” that allows Android devices to proactively recommend actions based on your habits, routines and real-world behavior.

Powered by Google’s broader Gemini Intelligence system, the feature could do things like suggest your workout playlist when you arrive at the gym or prompt you to cast a sports game around your usual viewing time.

Google appears to be transforming Android from an operating system you control manually into one that increasingly anticipates what you want before you ask. That would make this one of the biggest shifts in smartphone computing since the rise of voice assistants.

Android is becoming proactive instead of reactive

According to a new report from The Verge, Google’s newer Android AI features suggest something different is happening now. Instead of waiting for instructions, Android may increasingly predict your next action and surface relevant tools automatically. It might start to understand your routines and habits, then suggest actions across apps.

That’s a major shift from “smartphone” to something much closer to an ambient AI system.

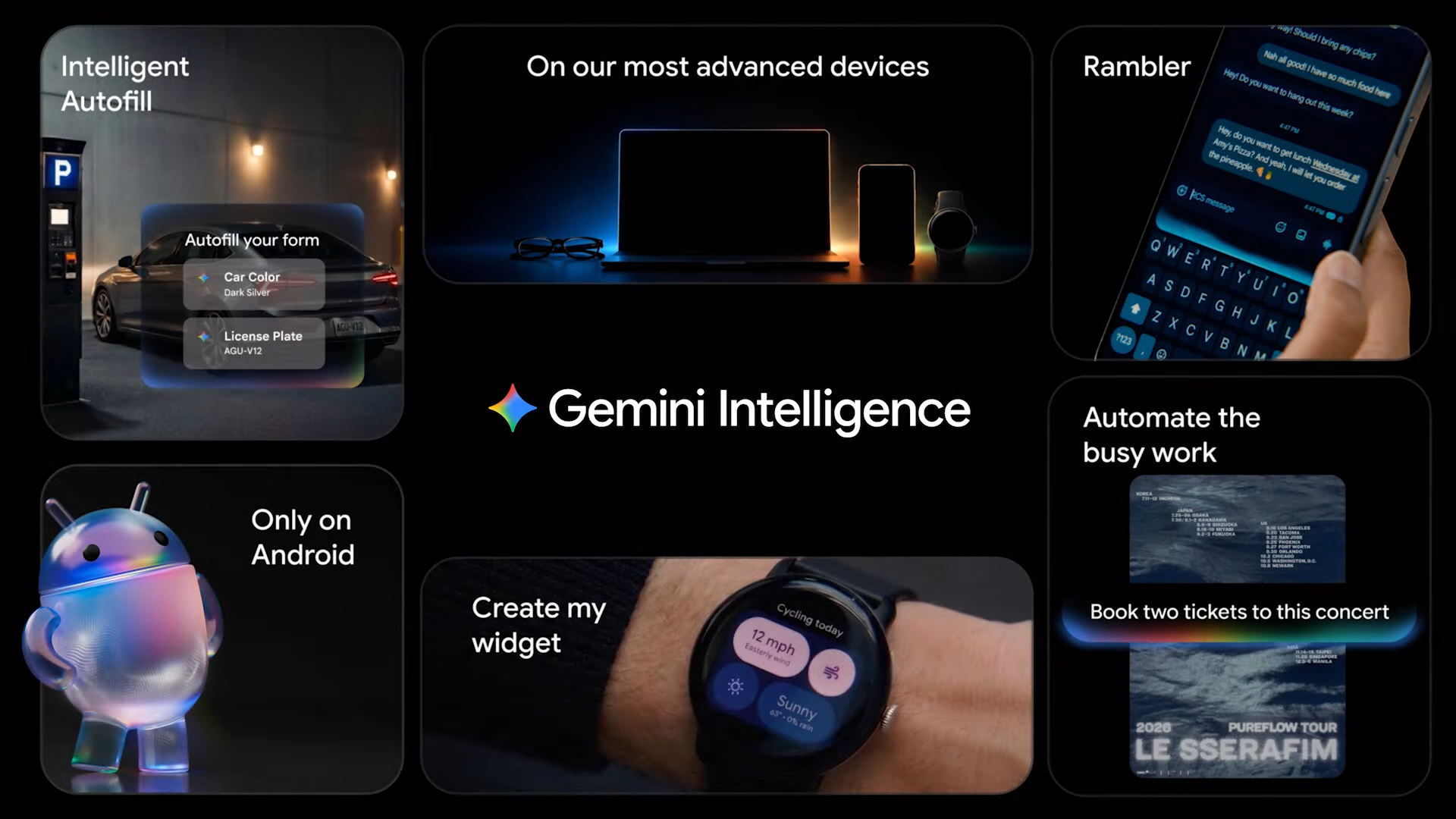

Google has already hinted at this through its new “Gemini Intelligence” system, which the company says will power advanced AI experiences across phones, watches, cars and other devices.

Reports from 9to5Google suggest some of these features could debut first on future Samsung Galaxy devices, including the Galaxy Z Fold 8.

Your phone may start acting more like an AI agent

Google appears to be building toward “agentic” Android devices that can take action instead of simply responding to commands. Rather than constantly opening apps and searching manually, your device may increasingly surface what you need before you even think about it.

Google is also trying to solve the privacy problem. One reason this rollout could resonate with users is that Google reportedly says many of these Contextual suggestions are processed locally on-device in an encrypted environment rather than entirely in the cloud.

That’s important because one of the biggest concerns around AI assistants is how much personal data they collect and process remotely. If Android can anticipate behavior while keeping more processing on-device, Google may be able to position these features as faster, more personalized and less dependent on cloud servers while also being more private.

That mirrors a broader industry trend toward local AI processing, where phones increasingly use onboard AI hardware instead of relying entirely on remote data centers.

Bottom line

Google’s latest Android direction points toward predictive computing. Instead of interacting with AI occasionally, users may soon live inside AI-powered operating systems that continuously adapt to behavior, context and routines in real time.

And if Google succeeds, Android phones could change the way we use smartphones forever.
