Hand movements are thought to be the genesis of human communication, which is why scientists, creatives and designers have been obsessed with capturing human motion.

Hand waving gets a bad rap – the phrase is used to describe vague approximations or being out of your depth. But if you’re worried about gesturing too wildly in a meeting, just remember – hand waving actually makes you smarter, better educated and way more popular. It’s the best way to go viral – and it’s at the heart of being human.

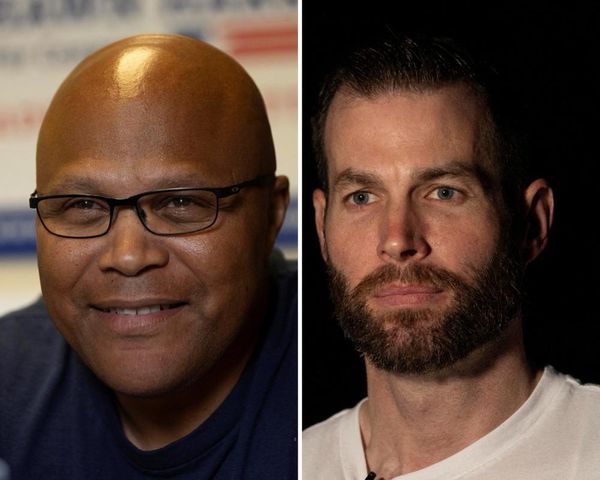

Back in 2015, researchers in Portland, Oregon, looked at the full range of TED Talks – online talks of up to 18 minutes by experts in everything from technology to creativity – and compared the number of hand gestures each speaker used with the popularity of their talk. The results were surprising: the least popular speakers, with the least shared videos, used an average of 272 hand gestures per talk, while the most popular used roughly 1.7 times as many, at 465 gestures per talk.

Hand wavers are seen as warm, agreeable and energetic, while kids who use more hand gestures when very young go on to have larger vocabularies. According to Suzanne Aussems at the University of Warwick, hand gestures were the first human language: no one has been able to teach chimpanzees to talk, but researchers have been teaching them sign language since the 1960s.

Perhaps it’s because gestures are so fundamental that scientists, engineers and science fiction writers have been obsessed with technology that captures them. Isaac Asimov first imagined a robot controlled by hand gestures in 1940, in the short story Strange Playfellow. These ideas travelled hand-in-hand, if you’ll forgive the pun, with the work of anthropologists who studied gestures and broke them down into four basic types: conversational, communicative, manipulative and controlling.

The use of hand-gesture technology in cars like the Volkswagen Touareg has made secondary tasks, such as operating the radio, far less distracting – making it easier to concentrate on the primary task of driving.

Scientists have been working on reproducing the functions of hand gestures since 1977, when the Sayre Data Glove prototype was created at the University of Illinois at Chicago. But computers weren’t able to handle the amount of data involved until 1995, when Fakespace launched the Pinch glove as the first commercially available gesture-based interface. Even then, systems were slow, and measuring an ungloved hand moving in three dimensions was impossible.

“We humans make very rapid movements when we use our hands, much more so than other parts of our body, which is very hard for technology to capture,” explains Tae-Kyun Kim at Imperial College’s Department of Electrical Engineering. “Moving fingers and movements in the palm can cause distortions. Our hand also contorts in a range of different shapes, which is another challenge to overcome.”

This enormous complexity of movement is why the gesture-capturing technology used in film special effects, from the rotoscoping used by Disney to the motion capture used in Lord of the Rings, was so difficult to develop. Rotoscoping was essentially a time-consuming process in which animators traced over film of human actors; Disney used it for Snow White in 1937. Modern motion capture borrows from techniques the medical industry developed for studying joint-related illnesses. It was deployed in video games in the mid-1990s, and when Andy Serkis first dressed up as Gollum it still required laboratory-style conditions, with each “mocap-ready” camera roughly the size of a small refrigerator.

Inevitably, when thinking about hand-gesture technology, the movie that first comes to mind is Minority Report – Steven Spielberg’s 2002 sci-fi film set in 2054 and featuring a computer interface that the director imagined as “like conducting an orchestra”. Keen to make his vision of the future as accurate as possible, Spielberg recruited a 15-strong expert panel under MIT scientist John Underkoffler to produce a realistic tech bible.

The team were riding a wave. At about the same time, Carnegie Mellon University’s (CMU) Human-Computer Interaction Institute prototyped a gesture-based system to handle so-called “secondary tasks” in a car, such as using the stereo. “When a person is engaged in a cognitively demanding task, like driving, any secondary activity that demands their attention is potentially dangerous,” the Carnegie Mellon team argued. “A more usable system is a safer system, by requiring less attention, demanding less time, or causing less confusion.”

It’s the kind of gesture-control tech that has now been incorporated into the infotainment system of the new Volkswagen Touareg’s Innovation Cockpit, which lets you, for example, scroll easily through groups of preset radio stations or switch between two home screens to display an alternative set of customised information. It’s a use of technology that makes those “secondary activities” as simple, and as safe, as possible.

Karolina Sobecka’s interactive shop-front installation Sniff: as the viewer walks by, the projection of a dog follows, dynamically responding to gestures.

If CMU was into controlling, creatives were into communicating. In 2009, artist Karolina Sobecka debuted Sniff, a digital dog in a Brooklyn shop window that detected and followed passers-by, reacting to their hand gestures to decide whether they were “friendly” or “hostile”. Pure hand-gesture technology reached the living room in 2010 via Microsoft’s Kinect, an add-on for the Xbox 360 gaming system that used a camera to record movement in two dimensions and an infrared beam to measure depth. Some variant of the Kinect system is at the heart of most industrial robot gesture controls.

Finally realising Asimov’s 1940 dream, DJI launched Spark in 2017: a tiny drone, controlled by hand gestures, designed to follow its owner and take a selfie when they framed their face with their hands in a particular way. This is where the cuddly gesture tech of pop culture starts to overlap with darker systems and morally complicated alternatives.

Speaking at Wired’s 2017 conference, General Sir Richard Barrons, former Commander of Joint Forces Command, explained why gesture control is now a focus of military research. “Right now, you blow a hole in a terrorist compound wall and send through a 20-year-old and a dog,” he pointed out. “In the future a machine will go through that breach first. If that machine is autonomous and is applying lethal force based on its algorithm, it may not only kill the terrorist, it may also kill his children.” Gesture-based tech keeps human decision-making central on the battlefield. Like the car radio, it’s about control and safety; it is just safety on an entirely different scale.

DJI’s aerial photography drone Spark is controlled by hand gestures.

The US military has prototyped robotic mules that respond to specific army hand signals, such as stop, slow down, prepare to move and freeze, while autonomous drones based on aircraft carriers can be brought in to land using classic aircraft-control hand signals. Because cameras need line of sight and struggle to tell friend from foe amid the mayhem of a battlefield, camera-based tech is slowly being replaced by electromyography (EMG) recorders such as the JPL BioSleeve, which uses electrodes placed on the arm to measure the electrical activity of the muscles and can be used to open and close robotic hands. A 2016 report by the US Army Research Laboratory pointed out one major concern in an age of cyberwarfare: how do you ensure your robots don’t get hacked?

At the cuddly end of life-or-death robotics (a phrase you rarely encounter) there’s the ANYmal disaster rescue robot. You may already have been freaked out by videos of ANYmal using a lift, but its key use in search and rescue requires an armband-based gesture-control system. It’s going to take some time before rescue robots can head out to sea and, relying on their own cameras, make that most human of decisions, as framed by the British poet Stevie Smith in Not Waving but Drowning: which of the swimmers is waving, and which is drowning? For the time being, only poetry has the answer.

Photography: Jonny Storey, Karolina Sobecka, courtesy of Fakespace, Getty Images