The last few weeks have not been good for Facebook, ever since the Wall Street Journal began publishing the Facebook Files on September 13. Last week, former Facebook employee and whistleblower Frances Haugen came out on 60 Minutes and in testimony to Congress to declare that this documentary evidence shows how Facebook prioritizes profits at the risk of spreading misinformation and harmful content. This hurts Facebook's face and its books; its public image has been damaged and the stock has fallen 13% since it reached an all-time high in early September.

On Sunday, Facebook’s VP of Global Affairs, Nick Clegg, defended Facebook on NBC’s Meet the Press, pointing to the 40,000 people it has employed and the $13 billion it has spent fighting misinformation and hate speech. How did Facebook get to this point, despite the massive resources it claims it has deployed? Is there any hope social media platforms can stay out of trouble? I think there is a chance, if social media companies draw a clear line between social platforms and news platforms.

The Problem

Facebook has never shied away from being a news source. The feed itself is called ‘News Feed’, and it has become the de facto term for content feeds across social media platforms. Facebook has its own News app that is prominent on its menu and is working on a self-publishing news platform. Regardless of its strategic intent, the market sees Facebook’s feed as a prime source of news. According to Pew Research, about one half of Americans use social media regularly as a source of news and one third of Americans do so on Facebook.

And therein lies the problem. Social media platforms are meant to enable social interaction and community building, and naturally you will see the good and the bad of society reflected. My colleagues Doreen Shanahan and Cristel Russell from the Pepperdine Graziadio Business School worked with Ana Babic Rosario from the University of Denver to research the nature of social interaction on Facebook groups. Shanahan states: “We found that social pressure in Facebook groups is paradoxical. It can be beneficial when it contributes to members' feeling of empathy, leading to greater informational value. But social pressure packaged in harsh comments can generate angst and negative social dynamics which are detrimental to the community.”

A social media platform that is also viewed as a source of news will be held liable by users, government, and society for bad outcomes, at least more so than those that are not viewed that way. But based on this research, odds are low that Facebook can effectively curate the information flows and social dynamics of its 3 billion users for positive outcomes. Russell reflects: “If anxiety and stress emerge within social groups that have affinity and common interests and goals, imagine what happens in the wild west of news feeds, likes, and comments. People need to realize that, as in any other social setting, we become vulnerable when we engage with others, share our own experiences, and react to others’. Facebook is an enabler of social interaction on a mass level so the vulnerability risks are proportional to its massive size.”

Positioning a social media platform as a news source can contribute to negative outcomes, as users tend to believe what is posted on the platform, regardless of the source. Also, news feeds tailored to our preferences, likes, and friends are inherently biased and can become echo chambers of what we want to believe, which can have detrimental social effects. These echo chambers have led to the legitimization of misinformation and to the political polarization we see today.

The Solution

So what can Facebook do to get out of this mess? It could try to get better at fact-checking content as a news platform would, but that seems expensive and unattainable since much of the content is user-generated and spread through word of mouth. Rather, I think Facebook can shift strategically to follow other social media platforms that have stayed away from the news lane. TikTok and Twitch, for example, are used regularly for news by only 6% and 1% of Americans, respectively.

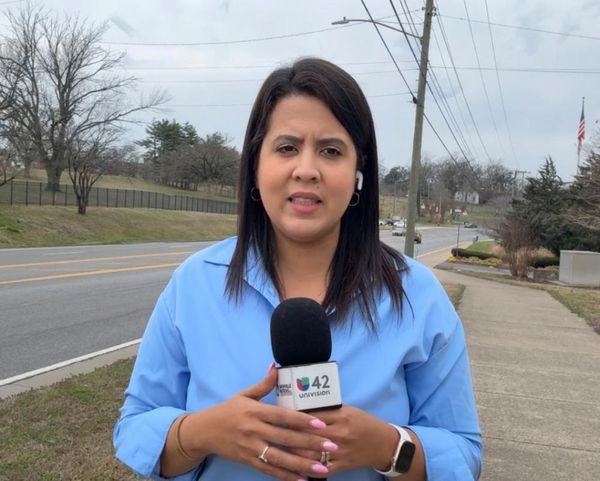

How can Facebook make this strategic shift? Theresa de los Santos, journalism professor at Pepperdine’s Seaver College, hints at the solution: "It is problematic that a business designed to attract attention has grown into a leading source of ‘news’ information. There is a difference between a person sharing a news article from a reputable news organization or journalist and someone sharing fringe content or their personal experience or opinion as news. This is a news literacy issue."

Facebook can leverage all its platforms including Instagram to proactively deploy news literacy campaigns against misinformation. It can deliberately offer the disclaimer that its news feed alone is not a reliable and unbiased source of news, or at least warn readers to check their sources. The social impact would be very positive, and to the benefit of Facebook’s shareholders, it would preempt the continuous PR crises and save billions of dollars that would be spent to address the issue. Content monitoring resources can then be shifted to enforcing its community standards against crime, bullying, and other negative social behavior, a win-win for society and for Facebook’s reputation.

In the end, I predict Facebook will decide in favor of long-term profits, because that is what Facebook’s executives are paid to do. As a business and tech enthusiast, I don’t find profit seeking from tech surprising. What surprises me is how often companies make growth moves for growth’s sake, discounting the possible negative consequences. Social media companies may be better off staying away from a news role and instead focusing on their role as social enablers. For Facebook, it’s worth considering, to save face while improving the books.