Screenshots, spreadsheets, and frantic Slack pings dominate manual SOC 2 prep. In a 2023 compliance survey, nearly 70% of service organizations reported juggling six or more frameworks, turning evidence gathering into a year-round chore. Plugging a compliance platform into AWS, GitHub, Okta, and your HRIS pulls timestamped proof around the clock and frees up as much as 80 percent of audit-prep hours. In this guide, you’ll see how that automation works, where it removes the most pain, and where human review still matters.

Why manual evidence collection hurts

Auditors need evidence that spans the entire review window: daily CloudTrail logs, onboarding checklists, backup reports, Jira tickets, and more. A one-off screenshot will never satisfy them.

Collecting that proof by hand is expensive. Exact hours vary with scope, but teams that rely on spreadsheets and screenshots spend a large share of every Type II audit cycle just gathering evidence. According to Vanta's compliance management software guide, automation can cut that effort by up to 82% by pulling evidence directly from your systems.

The pain grows with scope. Organizations that juggle six or more frameworks, such as SOC 2 and ISO 27001, end up repeating much of the same evidence collection several times a year.

Manual handoffs also hide errors. A stale IAM CSV can miss a lingering admin account, and a mis-filtered Jira report can drop two incident tickets. Auditors spot the gaps, send follow-ups, and the clock resets.

Morale suffers too. Engineers dread “screenshot season,” and compliance managers stitch files together in a folder called Final_v4_last-draft. Hundreds of hours, frayed nerves, and missed launch dates turn manual collection into a pressure cooker—one that pushed the industry toward automation.

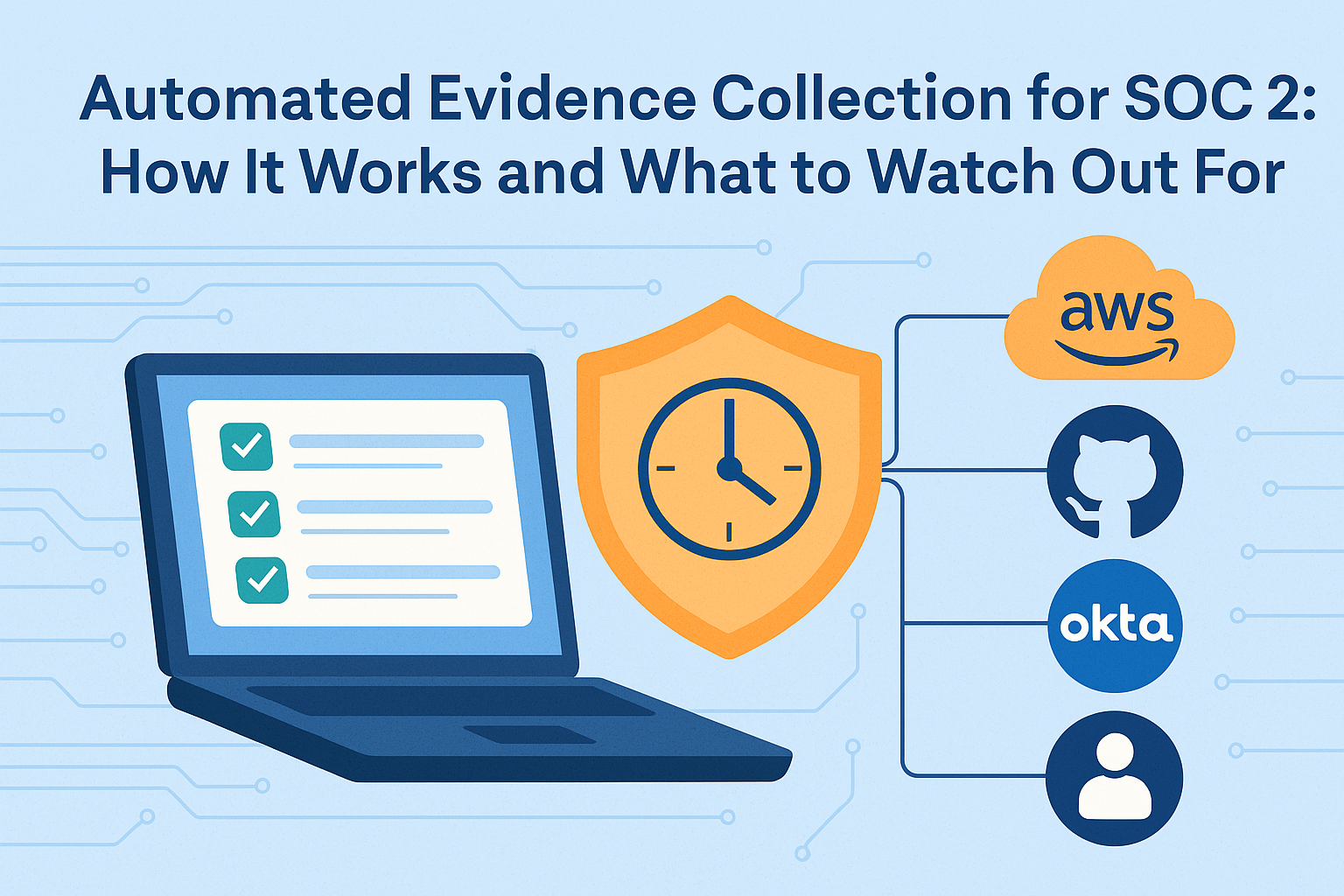

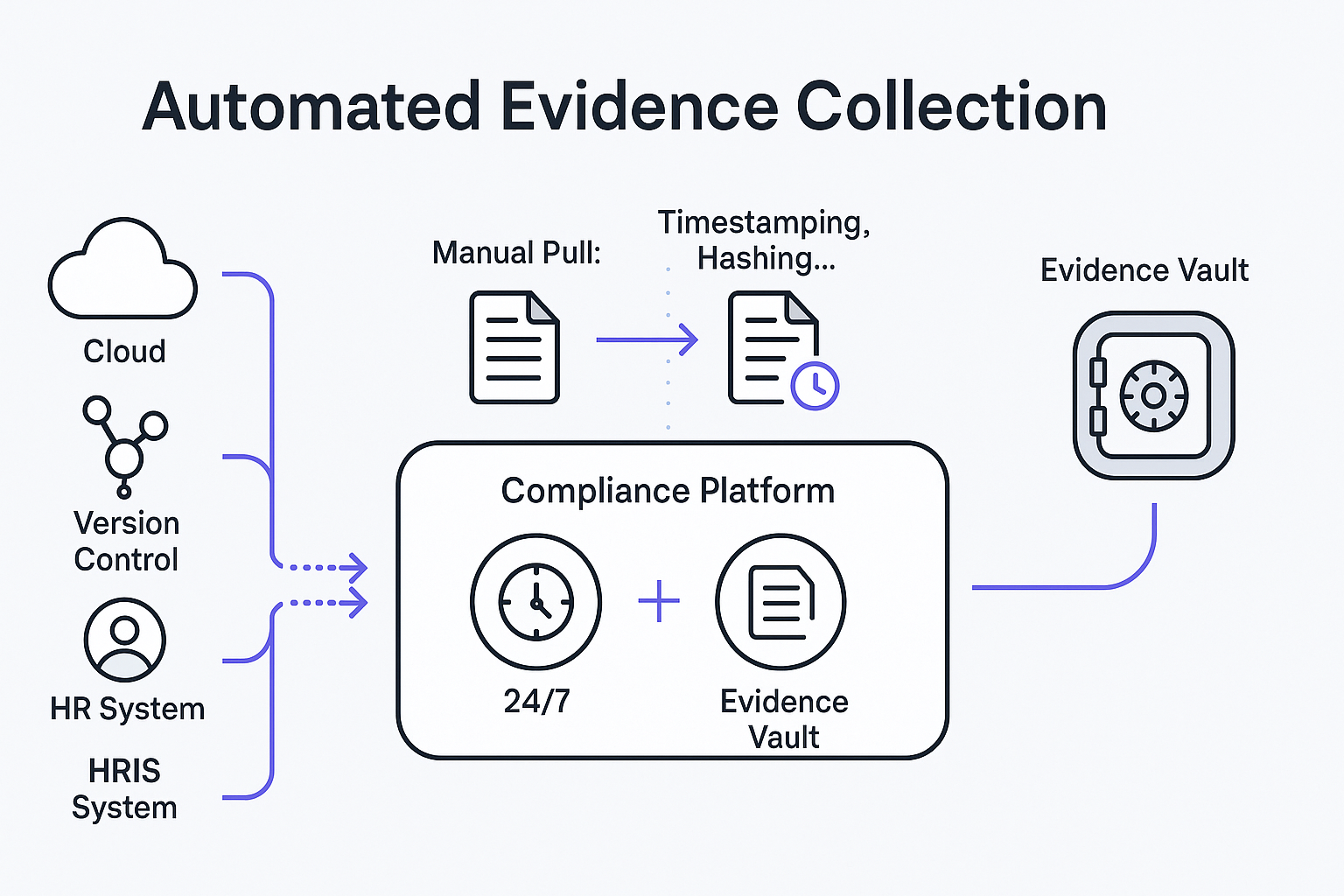

How automated evidence collection works

Integration with your tech stack

Automation starts with a read-only, least-privilege connection to your stack (AWS, Azure, GitHub, Okta, Jira, your HRIS, and more). The platform connects through pre-built connectors authorized with API tokens, OAuth apps, or IAM roles. Those connectors sync at least daily, and often every few minutes for critical cloud checks. Each sync ingests configs, user lists, and logs and tags every data point with its source and timestamp. If an S3 bucket appears at 2:14 a.m., for example, its encryption state is captured before business hours. That continuous feed powers downstream tests, alerts, and the encrypted evidence vault that auditors rely on later.
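To make "tagging every data point" concrete, here is a minimal sketch using only the Python standard library. It assumes the connector has already fetched a raw `payload` through the provider's read-only API. The `EvidenceRecord` type, the `tag_evidence` function, and source labels like `"aws:s3"` are hypothetical and do not come from any vendor's schema.

```python
import hashlib
import json
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class EvidenceRecord:
    """One collected data point plus the metadata that says where it came from."""
    source: str        # illustrative label such as "aws:s3" or "okta:users"
    collected_at: str  # ISO-8601 UTC timestamp of the sync that captured it
    payload: dict      # raw config, user entry, or log line returned by the API
    sha256: str        # hash of the canonical JSON form of the payload


def tag_evidence(source: str, payload: dict,
                 now: Optional[datetime] = None) -> EvidenceRecord:
    """Wrap a raw API result with its source, capture time, and content hash."""
    now = now or datetime.now(timezone.utc)
    # Sorted keys and fixed separators make the hash independent of key order.
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    digest = hashlib.sha256(canonical.encode("utf-8")).hexdigest()
    return EvidenceRecord(source, now.isoformat(), payload, digest)


# Example: a bucket discovered by the 02:14 UTC sync.
record = tag_evidence(
    "aws:s3",
    {"bucket": "prod-logs", "sse_algorithm": "aws:kms"},
    now=datetime(2024, 3, 1, 2, 14, tzinfo=timezone.utc),
)
print(record.source, record.collected_at, record.sha256[:12])
```

The sketch hashes a canonical JSON form rather than the raw API response. That way the same configuration always produces the same fingerprint, even if the API returns keys in a different order.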

Control mapping and automated tests

Once data flows, the platform maps each record to the controls in your SOC 2 scope, and through them to the Trust Services Criteria. A spreadsheet cannot keep up with that mapping at thousands of rows per hour.

Each control is a yes-or-no question: Is MFA enabled for all cloud admins? Are S3 buckets encrypted? Did every new hire finish security training within 30 days? The platform ties those questions to live data and re-scores them throughout the day.

A green check means the evidence meets the rule; a red X flags drift the moment it happens. The dashboard shows pass/fail status in real time, so you know, rather than guess, where you stand.
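Treating each control as a yes/no question maps naturally onto a predicate function. The minimal sketch below scores a control against a batch of records and returns green or red. The `Control` type, the control IDs, and record fields like `is_admin` and `sse_algorithm` are illustrative assumptions, not a real platform's data model.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Control:
    control_id: str                  # your own control ID (naming is hypothetical)
    question: str                    # the yes/no question the control answers
    check: Callable[[dict], bool]    # True when a single record satisfies the rule


def mfa_for_admins(user: dict) -> bool:
    # Non-admin users are out of scope for this control, so they pass.
    return (not user.get("is_admin", False)) or bool(user.get("mfa_enabled", False))


def bucket_encrypted(bucket: dict) -> bool:
    # Passes when the bucket reports any default server-side encryption algorithm.
    return bool(bucket.get("sse_algorithm"))


def score(control: Control, records: List[dict]) -> Dict[str, object]:
    """Evaluate one control against every record: green if none fail, red otherwise."""
    failures = [r for r in records if not control.check(r)]
    return {
        "control": control.control_id,
        "status": "pass" if not failures else "fail",
        "failing_records": failures,
    }


mfa = Control("AC-01", "Is MFA enabled for all cloud admins?", mfa_for_admins)
users = [
    {"name": "ana", "is_admin": True, "mfa_enabled": True},
    {"name": "raj", "is_admin": False},
    {"name": "lee", "is_admin": True, "mfa_enabled": False},
]
print(score(mfa, users))  # status "fail", with lee as the only failing record
```

Returning the failing records alongside the status keeps a red X actionable: the dashboard can point straight at the account or bucket that caused it.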

Continuous monitoring and real-time alerts

Controls rarely stay green forever. A new engineer might pass the 30-day mark without finishing security training, and your training-completion metric slips from 100 percent to 98 percent.
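The arithmetic behind that slip is simple: with 50 hires in scope, one overdue hire leaves 49 of 50 compliant, or 98 percent. The sketch below assumes a hypothetical roster of `(start_date, completion_date)` pairs. It treats a hire who hasn't finished but is still inside the 30-day window as passing.

```python
from datetime import date, timedelta
from typing import List, Optional, Tuple

TRAINING_WINDOW = timedelta(days=30)


def trained_on_time(start: date, completed: Optional[date], today: date) -> bool:
    """Pass if training finished within 30 days of start, or the window is still open."""
    deadline = start + TRAINING_WINDOW
    if completed is not None:
        return completed <= deadline
    return today <= deadline  # not done yet, but not overdue either


def completion_rate(hires: List[Tuple[date, Optional[date]]], today: date) -> float:
    """Percentage of hires who are compliant with the training control today."""
    if not hires:
        return 100.0
    on_time = sum(trained_on_time(start, done, today) for start, done in hires)
    return 100.0 * on_time / len(hires)


# Hypothetical roster: 49 hires trained on time, 1 hire past the deadline.
today = date(2024, 6, 1)
roster = [(date(2024, 1, 2), date(2024, 1, 15))] * 49 + [(date(2024, 4, 1), None)]
print(completion_rate(roster, today))  # 98.0
```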

Always-on monitoring closes that gap. Tools such as Vanta run 1,200+ automated tests every hour and push failed results to Slack or open a Jira ticket, typically within 10 minutes of detection.
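One reasonable way to keep alerts useful is to notify only on transitions. A control that flips from pass to fail, or a new control that starts out failing, triggers an alert. A control that was already red does not trigger another alert every hour. The sketch below is an assumption about how such routing could work, not a description of Vanta's internals. It uses Slack's incoming-webhook format, which accepts a JSON body with a `text` field. The webhook URL is a placeholder you would supply.

```python
import json
import urllib.request
from typing import Dict, List


def detect_drift(previous: Dict[str, str], current: Dict[str, str]) -> List[str]:
    """Controls that are failing now but were not failing on the previous run."""
    return sorted(
        cid for cid, status in current.items()
        if status == "fail" and previous.get(cid) != "fail"
    )


def slack_payload(control_ids: List[str]) -> dict:
    """Build the JSON body for a Slack incoming webhook (a simple "text" message)."""
    lines = "\n".join(f"- {cid} is now failing" for cid in control_ids)
    return {"text": f"Compliance drift detected:\n{lines}"}


def post_to_slack(webhook_url: str, payload: dict) -> int:
    """POST the payload to the webhook URL and return the HTTP status code."""
    req = urllib.request.Request(
        webhook_url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=10) as resp:
        return resp.status


previous = {"AC-01": "pass", "DS-01": "fail", "HR-01": "pass"}
current = {"AC-01": "fail", "DS-01": "fail", "HR-01": "pass"}
drifted = detect_drift(previous, current)
print(drifted)  # ['AC-01']; DS-01 was already failing, so it is not re-announced
# post_to_slack("YOUR_WEBHOOK_URL", slack_payload(drifted))
```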

Because alerts land while the issue is still small, the fix is quick: enable MFA for the new admin, turn on default encryption for the unencrypted bucket, or nudge the new hire to finish Security 101. Instead of retroactive cleanup, you practice continuous hygiene.

Central evidence repository

All logs, screenshots, and config snapshots land in a single AES-256-encrypted vault with role-based access controls. Each item carries a “birth certificate” (source, collection method, timestamp, and hash), so auditors can verify authenticity without repeat requests. A built-in evidence library lets you search by keyword, filter by type or date, and export large ZIP bundles in one click. Type “S3 encryption,” set a date range, and you get a clean, ready-to-share bundle instead of digging through endless “final” folders.
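The “birth certificate” idea can be sketched with the standard library. Each item records a SHA-256 hash at collection time, and an auditor recomputes it later to confirm the file hasn't changed. The `VaultItem` type and the keyword/date search and ZIP export functions below are hypothetical helpers for illustration. The sketch leaves out encryption at rest and access control, which a real vault would handle in its storage layer.

```python
import hashlib
import io
import zipfile
from dataclasses import dataclass
from datetime import date
from typing import List


@dataclass
class VaultItem:
    name: str
    source: str          # system the evidence came from, e.g. "aws:s3"
    method: str          # how it was collected, e.g. "api" or "manual upload"
    collected_on: date
    content: bytes
    sha256: str          # hash recorded at collection time ("birth certificate")


def make_item(name: str, source: str, method: str, collected_on: date, content: bytes) -> VaultItem:
    return VaultItem(name, source, method, collected_on, content,
                     hashlib.sha256(content).hexdigest())


def verify(item: VaultItem) -> bool:
    """Auditor-side integrity check: recompute the hash and compare it to the record."""
    return hashlib.sha256(item.content).hexdigest() == item.sha256


def _norm(text: str) -> str:
    # Treat dashes and underscores like spaces so "S3 encryption" matches "s3-encryption".
    return text.lower().replace("-", " ").replace("_", " ")


def search(items: List[VaultItem], keyword: str, start: date, end: date) -> List[VaultItem]:
    kw = _norm(keyword)
    return [i for i in items if kw in _norm(i.name) and start <= i.collected_on <= end]


def export_zip(items: List[VaultItem]) -> bytes:
    """Bundle the items plus a tab-separated manifest of their metadata into one ZIP."""
    buf = io.BytesIO()
    with zipfile.ZipFile(buf, "w", zipfile.ZIP_DEFLATED) as zf:
        rows = ["name\tsource\tmethod\tcollected_on\tsha256"]
        for i in items:
            zf.writestr(i.name, i.content)
            rows.append(f"{i.name}\t{i.source}\t{i.method}\t{i.collected_on}\t{i.sha256}")
        zf.writestr("MANIFEST.tsv", "\n".join(rows))
    return buf.getvalue()


vault = [
    make_item("s3-encryption-2024-03-01.json", "aws:s3", "api", date(2024, 3, 1), b'{"sse": "aws:kms"}'),
    make_item("okta-mfa-2024-03-01.json", "okta", "api", date(2024, 3, 1), b'{"mfa": true}'),
]
bundle = export_zip(search(vault, "S3 encryption", date(2024, 1, 1), date(2024, 12, 31)))
```

Putting the manifest inside the ZIP means the hashes travel with the files. An auditor can check integrity without asking for a second export.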

Auditor access and reporting

When audit season hits, you don’t trade thirty attachments or spin up a new share drive. Instead, the platform lets you hand auditors either:

- an auto-generated ZIP or PDF that lists each control, its latest evidence, and the metadata proving authenticity

- a read-only auditor portal, where examiners can drill into evidence, leave comments, and pull their own samples
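An auto-generated report of the first kind boils down to "latest evidence per control, plus its metadata." The minimal sketch below assumes evidence arrives as dictionaries with hypothetical keys (`control_id`, `name`, `source`, `collected_at`, `sha256`) and ISO-8601 timestamps that carry a UTC offset. It emits JSON that a PDF or ZIP generator could consume.

```python
import json
from datetime import datetime
from typing import Dict, List


def latest_per_control(evidence: List[dict]) -> List[dict]:
    """Keep only the most recent evidence item for each control, sorted by control ID."""
    latest: Dict[str, dict] = {}
    for item in evidence:
        cid = item["control_id"]
        # Timestamps must all carry a UTC offset; mixing naive and aware values raises TypeError.
        ts = datetime.fromisoformat(item["collected_at"])
        if cid not in latest or ts > datetime.fromisoformat(latest[cid]["collected_at"]):
            latest[cid] = item
    return [latest[cid] for cid in sorted(latest)]


def auditor_report(evidence: List[dict]) -> str:
    """One row per control: its latest evidence plus the metadata that proves authenticity."""
    rows = [
        {
            "control": e["control_id"],
            "evidence": e["name"],
            "source": e["source"],
            "collected_at": e["collected_at"],
            "sha256": e["sha256"],
        }
        for e in latest_per_control(evidence)
    ]
    return json.dumps(rows, indent=2)


evidence = [
    {"control_id": "AC-01", "name": "okta-mfa-mar.json", "source": "okta",
     "collected_at": "2024-03-01T00:00:00+00:00", "sha256": "aa11"},
    {"control_id": "AC-01", "name": "okta-mfa-apr.json", "source": "okta",
     "collected_at": "2024-04-01T00:00:00+00:00", "sha256": "bb22"},
    {"control_id": "DS-01", "name": "s3-encryption.json", "source": "aws",
     "collected_at": "2024-02-01T00:00:00+00:00", "sha256": "cc33"},
]
print(auditor_report(evidence))
```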

Real-world impact: one B2B SaaS company cut its SOC 2 fieldwork from one month to seven days after switching to an automated auditor portal, trimming audit costs by roughly 70 percent. Transparent evidence lets auditors trace every finding back to source in minutes, which slashes follow-up questions and keeps schedules predictable.

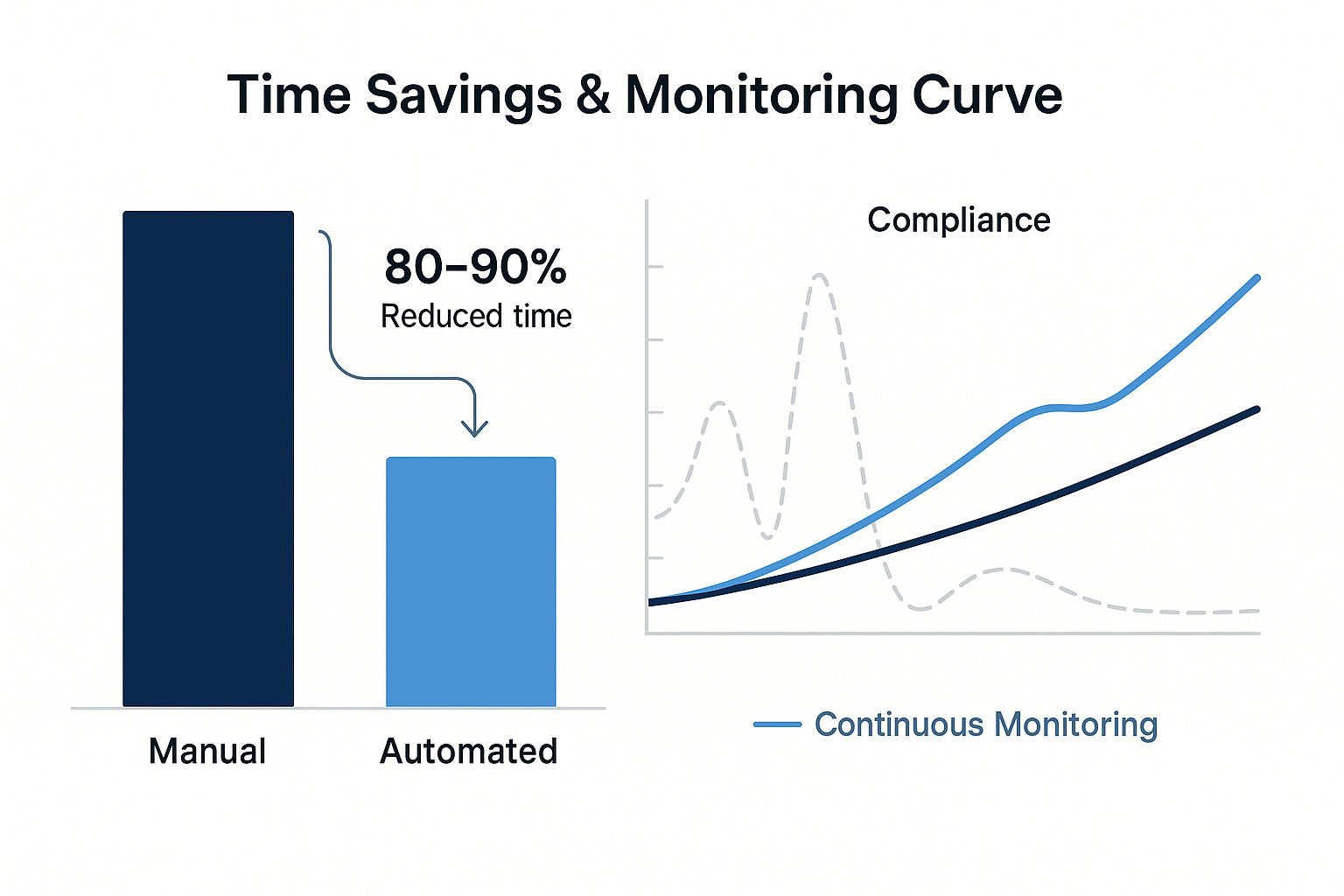

Key benefits of automation

Swapping screenshots for API calls frees big blocks of engineer and GRC time. Teams with automated evidence collection often see 80–90% less effort per framework versus spreadsheet-heavy workflows. The platform pulls fresh data automatically, so releases don’t pause for manual exports and the evidence library stays current.

Automation also turns compliance from a yearly fire drill into a steady, monitored rhythm. Platforms run hundreds or thousands of tests on an hourly or near-real-time schedule, pinging Slack or opening Jira tickets within minutes when an S3 bucket loses encryption or a new hire misses training. The fix becomes fresh evidence, so posture stays stable instead of spiking before audits.

Because evidence comes directly from cloud APIs and identity stores—stamped with timestamps and hashes—accuracy and completeness improve. Auditors can validate integrity in seconds, cutting “Is this the right log?” back-and-forth and re-pulls. Some security teams have cut prep time from six weeks to two by relying on continuously hashed, centrally organized evidence.

Implementing automated evidence collection

1. Define clear, testable controls

Automation only works when the platform can score a control as pass or fail. For every item, write a quantified objective tied to a recognized standard:

- Backups: “All production S3 buckets use versioning and server-side encryption (CIS AWS Foundations Benchmark v2.0 §2.1).”

- Training: “Every new hire completes security training within 30 days of the start date, and completion is logged in the LMS.”

- MFA: “MFA is enabled for every Okta user; any exception expires after 24 hours.”

These crisp rules let the platform evaluate evidence without human judgment, trigger alerts only when real drift occurs, and generate unambiguous proof for auditors.
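Here is how the backup and MFA objectives above might translate into code, as a minimal sketch. The bucket fields `versioning` and `sse_algorithm` are hypothetical names loosely modeled on the values S3 reports for bucket versioning status and default encryption. The `exception_granted_at` field on a user record is likewise an assumption. The training rule follows the same pattern with a 30-day window.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional

MFA_EXCEPTION_TTL = timedelta(hours=24)


def backup_rule(bucket: dict) -> bool:
    """Versioning must be "Enabled" and a default SSE algorithm must be set."""
    return bucket.get("versioning") == "Enabled" and bool(bucket.get("sse_algorithm"))


def mfa_rule(user: dict, now: datetime) -> bool:
    """MFA is on, or an exception was granted less than 24 hours ago."""
    if user.get("mfa_enabled"):
        return True
    granted: Optional[datetime] = user.get("exception_granted_at")
    return granted is not None and now - granted < MFA_EXCEPTION_TTL


now = datetime(2024, 5, 2, 12, 0, tzinfo=timezone.utc)
print(backup_rule({"versioning": "Suspended", "sse_algorithm": "AES256"}))  # False
print(mfa_rule({"mfa_enabled": False, "exception_granted_at": now - timedelta(hours=25)}, now))  # False
```

Because the exception expiry is a hard threshold rather than a judgment call, the check fails on its own once the 24 hours lapse. Nobody has to remember to revoke it.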

2. Choose a platform with real integration depth

A logo grid looks nice, but depth is what matters. Ask three specifics before you sign:

- Scope. How many data points does the AWS connector pull? Look for platforms that ingest configuration from core security and monitoring services (for example, Security Hub, GuardDuty, Config, Inspector, Macie) across all linked AWS accounts in one workspace.

- Cadence. How often does the tool refresh? Critical cloud checks should sync every few minutes to hourly, and everything else at least daily.

- Latency. How fast does a failed control appear on the dashboard? Run a sandbox test: disable MFA for one user and confirm the alert lands within 30 minutes.
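During a trial, you can turn the cadence and latency questions into pass/fail checks of your own. The sketch below assumes you record each connector's last sync time and tier yourself, plus the timestamps from the sandbox MFA test. The thresholds (one hour for critical connectors, one day for standard ones, 30 minutes for alerts) mirror the checklist above. The connector names are hypothetical.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, List, Tuple

# Targets from the evaluation checklist; adjust to your own requirements.
MAX_SYNC_AGE = {"critical": timedelta(hours=1), "standard": timedelta(days=1)}
ALERT_SLA = timedelta(minutes=30)


def stale_connectors(last_sync: Dict[str, Tuple[str, datetime]], now: datetime) -> List[str]:
    """Connectors whose most recent sync is older than their tier allows."""
    return sorted(
        name for name, (tier, synced_at) in last_sync.items()
        if now - synced_at > MAX_SYNC_AGE[tier]
    )


def alert_within_sla(change_made_at: datetime, alert_seen_at: datetime) -> bool:
    """Sandbox latency test: did the failed-MFA alert arrive within 30 minutes?"""
    return alert_seen_at - change_made_at <= ALERT_SLA


now = datetime(2024, 5, 1, 12, 0, tzinfo=timezone.utc)
connectors = {
    "aws": ("critical", now - timedelta(minutes=20)),
    "okta": ("critical", now - timedelta(hours=2)),
    "hris": ("standard", now - timedelta(hours=20)),
}
print(stale_connectors(connectors, now))  # ['okta']
```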

Depth beats breadth because shallow connectors lead to manual exports—the work that always resurfaces at audit time. Confirm an open API exists for edge systems, and document any blind spots upfront so they never surprise you later.

3. Turn alerts into action (and keep humans in the loop)

Automation flags issues in minutes, but people still close the loop. Lock in three elements before go-live:

- Ownership. Assign each alert type to a team with a target SLA. Swimlane’s 2025 study found 68 percent of companies take more than 24 hours to remediate critical findings, mostly because no one knew who owned the task.

- Channel. Send failed controls to the tools your team already checks—Slack, PagerDuty, or Jira—not a lonely inbox.

- Cadence. Hold a 10-minute weekly compliance stand-up to review fresh failures, track fixes, and tune rules.
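Ownership and SLAs work best written down as data rather than kept as tribal knowledge. The sketch below uses a hypothetical alert-type-to-team map with a GRC fallback, so that no alert is left without an owner. It lists alerts that breached their SLA, which is the sort of list a weekly stand-up could review. Team names, alert types, and SLA values are illustrative only.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Dict, List, Optional, Tuple

# Hypothetical ownership map: alert type -> (owning team, remediation SLA).
OWNERS: Dict[str, Tuple[str, timedelta]] = {
    "mfa_disabled": ("identity", timedelta(hours=24)),
    "s3_unencrypted": ("platform", timedelta(hours=24)),
    "training_overdue": ("people-ops", timedelta(days=7)),
}
# Anything unmapped falls back to the GRC team so no alert is ownerless.
DEFAULT_OWNER = ("grc", timedelta(hours=24))


@dataclass
class Alert:
    alert_type: str
    opened_at: datetime
    resolved_at: Optional[datetime] = None


def owner_of(alert: Alert) -> Tuple[str, timedelta]:
    return OWNERS.get(alert.alert_type, DEFAULT_OWNER)


def breached_sla(alerts: List[Alert], now: datetime) -> List[Alert]:
    """Alerts whose time to resolution (or time open so far) exceeds the owner's SLA."""
    breached = []
    for a in alerts:
        _, sla = owner_of(a)
        end = a.resolved_at or now
        if end - a.opened_at > sla:
            breached.append(a)
    return breached


now = datetime(2024, 5, 3, 9, 0, tzinfo=timezone.utc)
alerts = [
    Alert("mfa_disabled", now - timedelta(hours=30)),
    Alert("training_overdue", now - timedelta(days=2)),
    Alert("unknown_check", now - timedelta(hours=1)),
]
for a in breached_sla(alerts, now):
    print(owner_of(a)[0], a.alert_type)  # identity mfa_disabled
```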

Automation is an assistant, not the auditor of record. Assign an owner to review the dashboard each month: spot-check a few passes and fails, open the raw evidence, and confirm it matches reality. Keep the tool current—if your password policy moves from eight to twelve characters, update the platform’s test the moment you publish the change. A quarterly mock audit (export the bundle, walk through it line by line) keeps people in the loop and real audits on track.
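Two habits from this paragraph lend themselves to small scripts. One is drawing a handful of passing and failing results for a human to open. The other is keeping policy values like password length in a single place, so that the automated test changes when the policy does. The sketch below is hypothetical throughout. `PASSWORD_POLICY` and the `min_length` setting name are assumptions, not a real identity provider's API. The random seed is optional and only makes a sample reproducible for the audit trail.

```python
import random
from typing import List, Optional

# Single source of truth for the policy; update it the day the policy changes.
PASSWORD_POLICY = {"min_length": 12}  # raised from 8 in this example


def password_policy_ok(idp_settings: dict) -> bool:
    """Pass if the identity provider's configured minimum meets the written policy."""
    return idp_settings.get("min_length", 0) >= PASSWORD_POLICY["min_length"]


def spot_check_sample(results: List[dict], per_status: int = 3,
                      seed: Optional[int] = None) -> List[dict]:
    """Pick a few passing and a few failing results for a reviewer to verify by hand."""
    rng = random.Random(seed)
    sample: List[dict] = []
    for status in ("pass", "fail"):
        pool = [r for r in results if r["status"] == status]
        sample.extend(rng.sample(pool, min(per_status, len(pool))))
    return sample


results = [{"id": i, "status": "pass"} for i in range(10)] + [{"id": 100, "status": "fail"}]
for r in spot_check_sample(results, seed=7):
    print(r["id"], r["status"])
```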

4. Mind integration gaps, data scope, and evidence privacy

No platform covers every corner of your stack. The average enterprise runs 275 SaaS apps, yet only 26 percent of that spend is centrally managed. Map each control to a data source; if a connector is missing or shallow, choose between:

- feeding data through the open API

- documenting a manual pull

Re-check the map each quarter because new shadow apps appear faster than org charts change.
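The control-to-source map can live in version control as plain data, which makes the quarterly re-check a diff rather than a meeting. The sketch below assumes a hypothetical map in which `method` is `"connector"`, `"api"`, `"manual"`, or `None`. It treats a connector control as a gap if its source isn't actually connected.

```python
from typing import Dict, List, Set

# Hypothetical map: control -> where its evidence comes from and how.
COVERAGE: Dict[str, dict] = {
    "AC-01": {"source": "okta", "method": "connector"},
    "DS-01": {"source": "aws", "method": "connector"},
    "HR-01": {"source": "custom-hris", "method": "api"},      # fed through the open API
    "HR-02": {"source": "background-check-vendor", "method": "manual"},
    "NW-01": {"source": "legacy-vpn", "method": None},        # nobody has decided yet
}


def coverage_report(coverage: Dict[str, dict], connected: Set[str]) -> Dict[str, List[str]]:
    """Sort controls into automated, documented-manual, and gap buckets."""
    report: Dict[str, List[str]] = {"automated": [], "manual": [], "gap": []}
    for cid, entry in sorted(coverage.items()):
        method = entry.get("method")
        if method in ("connector", "api") and entry["source"] in connected:
            report["automated"].append(cid)
        elif method == "manual":
            report["manual"].append(cid)
        else:
            # Unmapped, or mapped to a connector that is not actually live.
            report["gap"].append(cid)
    return report


print(coverage_report(COVERAGE, connected={"okta", "custom-hris"}))
```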

Your compliance vault also stores sensitive configs and employee records, so treat it like production:

- Ensure evidence is encrypted with AES-256 at rest and protected by TLS 1.2 or later in transit.

- Enforce SSO and MFA for every user. Grip Security’s 2025 report shows that among popular AI applications with SAML capabilities, 80 percent are not properly managed or federated.

- Apply role-based access so auditors see only what they need, and mask PII before ingestion.

Avoid over-collection. For background checks, store only the pass/fail certificate, not the full report. Less data means a smaller blast radius if anything ever slips.
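Masking and minimization can both run before anything reaches the vault. The sketch below is deliberately simple. The regexes catch common email addresses and US-format Social Security numbers only, and a production pipeline would likely use a dedicated PII detection service. The allow-listed background-check fields are hypothetical.

```python
import re
from typing import Set

EMAIL = re.compile(r"[\w.+-]+@[\w-]+(\.[\w-]+)+")
US_SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")

# Only these fields from a background-check result are kept as evidence.
BACKGROUND_CHECK_FIELDS: Set[str] = {"employee_id", "status", "completed_on"}


def mask_pii(text: str) -> str:
    """Replace obvious emails and SSNs with placeholders before ingestion."""
    text = EMAIL.sub("[EMAIL]", text)
    return US_SSN.sub("[SSN]", text)


def minimize(record: dict, allowed: Set[str] = BACKGROUND_CHECK_FIELDS) -> dict:
    """Drop every field that is not on the allow-list (keep pass/fail, not the report)."""
    return {k: v for k, v in record.items() if k in allowed}


print(mask_pii("contact jane.doe@example.com, SSN 123-45-6789"))
# contact [EMAIL], SSN [SSN]
print(minimize({"employee_id": "E42", "status": "pass", "completed_on": "2024-03-01",
                "full_report": "...", "date_of_birth": "1990-01-01"}))
```

An allow-list is the safer default here. New fields a vendor adds to its report are dropped automatically instead of slipping into the vault.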

What’s next: AI and always-on compliance

AI is making compliance smarter through better use of data, not more integrations. Platforms now triage failed controls, summarize evidence, and power continuous controls monitoring (CCM), turning SOC 2 into a 24/7 live scorecard instead of an annual scramble. DevOps teams are shifting compliance left with policy-as-code and infrastructure-as-code (IaC) checks that block risky changes and log them as evidence. Regulators are catching up through new cybersecurity laws that accept software-collected evidence while still demanding data minimization, transparency, and human oversight.
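As an illustration of policy-as-code, the sketch below reads the JSON that `terraform show -json` produces for a saved plan. It fails the build if any `aws_security_group` would allow SSH from `0.0.0.0/0`. It relies on Terraform's JSON plan layout (`resource_changes`, each with `address`, `type`, and `change.after`) as we understand it, so verify the field names against your Terraform and AWS provider versions. The rule itself is just one example of a risky change to block.

```python
import json
import sys
from typing import List


def _opens_ssh_to_world(rule: dict) -> bool:
    """True if an ingress rule allows port 22 (or all traffic) from anywhere."""
    if "0.0.0.0/0" not in (rule.get("cidr_blocks") or []):
        return False
    proto = str(rule.get("protocol", "")).lower()
    if proto == "-1":  # "-1" means all protocols and ports
        return True
    from_port, to_port = rule.get("from_port") or 0, rule.get("to_port") or 0
    return proto in ("tcp", "6") and from_port <= 22 <= to_port


def open_ssh_violations(plan: dict) -> List[str]:
    """Addresses of security groups in the plan that would expose SSH publicly."""
    violations = []
    for rc in plan.get("resource_changes", []):
        if rc.get("type") != "aws_security_group":
            continue
        after = (rc.get("change") or {}).get("after") or {}  # None for deletions
        if any(_opens_ssh_to_world(r) for r in after.get("ingress") or []):
            violations.append(rc["address"])
    return violations


if __name__ == "__main__" and len(sys.argv) > 1:
    # Typical CI use: terraform plan -out=plan.out && terraform show -json plan.out > plan.json
    with open(sys.argv[1]) as f:
        found = open_ssh_violations(json.load(f))
    for address in found:
        print(f"BLOCKED: {address} opens SSH to 0.0.0.0/0")  # CI log doubles as evidence
    sys.exit(1 if found else 0)
```

A non-zero exit code is enough to block most CI pipelines. The printed lines, kept with the pipeline run, become a timestamped record that the check ran.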

Conclusion

Automated evidence collection doesn’t change what SOC 2 requires—only how hard it is to meet those requirements. Instead of screenshots and last-minute fire drills, you get a steady stream of timestamped proof that controls are working, as long as you still define clear, testable controls, know which systems produce which evidence, and have people ready to respond to alerts. When those pieces align, automation slashes prep time, reduces the risk of missed evidence, gives auditors a cleaner trail, and turns continuous collection into infrastructure for trust—making SOC 2 less of a hurdle and more of a lasting business asset.

FAQ

1. What is automated evidence collection in the context of SOC 2?

It is the practice of connecting a compliance platform to your cloud, identity, HR, and ticketing systems so it can pull logs, configurations, and records on a schedule. Instead of manually exporting data for each control, the platform gathers and organizes that proof for you and keeps it tied to specific SOC 2 requirements.

2. Does automation replace auditors or compliance teams?

No. Automation replaces repetitive collection work, not professional judgment. You still need auditors to test controls and issue the SOC 2 report, and you still need internal owners who define control objectives, respond to alerts, and verify that automated checks match reality.

3. How long does it take to implement an automated evidence platform?

Setup usually takes days: connect core systems, map controls, and enable prebuilt tests. The heavier lift is refining controls, assigning owners, and tuning alerts—often starting small and expanding coverage over a quarter or two.

4. What should I look for when choosing an automation tool for SOC 2?

Focus on integration depth and operational fit: the platform should connect to your existing tools, pull the exact data points auditors need, update on a useful cadence, and surface issues where teams already work (e.g., Slack or Jira). It must also offer strong access controls, encryption, and data residency options to protect sensitive evidence.