Researchers have figured out a way to transform a few dozen pixels into a high resolution image of a face using artificial intelligence.

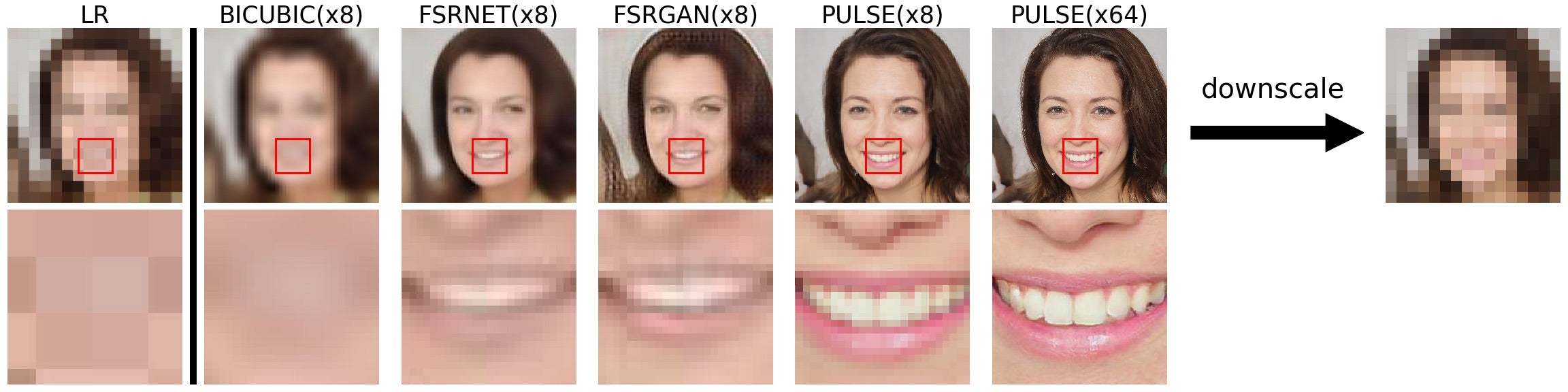

A team from Duke University in the US created an algorithm capable of "imagining" realistic-looking faces from blurry, unrecognisable pictures of people, eight times more effectively than previous methods.

"Never have super-resolution images been created at this resolution before with this much detail," said Duke computer scientist Cynthia Rudin, who led the research.

The images generated by the AI do not depict real people; instead, they are faces that look plausibly real. The system therefore cannot be used to identify people from low resolution images captured by security cameras.

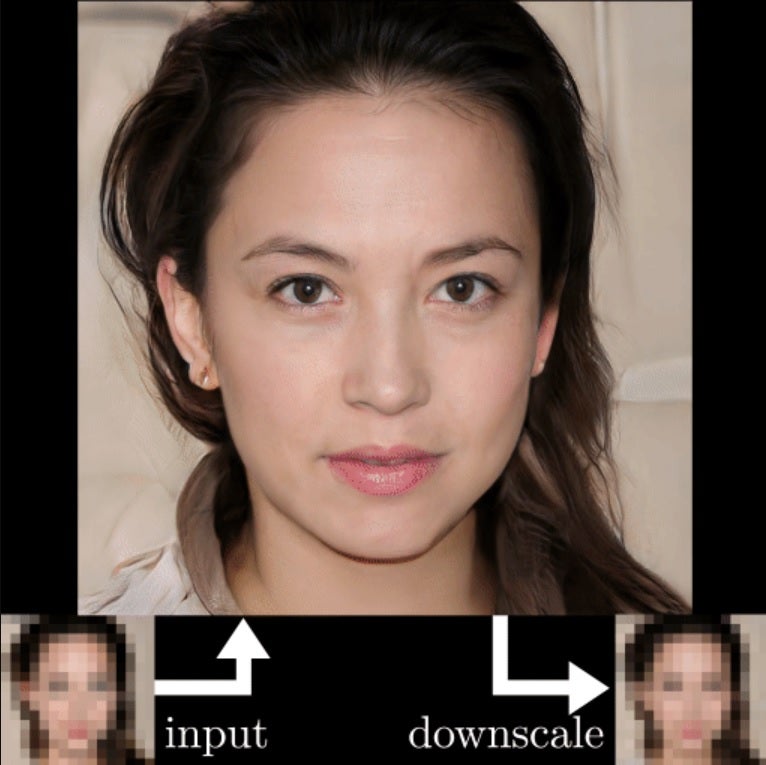

The PULSE (Photo Upsampling via Latent Space Exploration) system developed by Dr Rudin and her team creates images with up to 64 times the resolution of the original blurred picture.

The PULSE algorithm achieves this by working backwards: it searches through AI-generated high resolution images for ones that match the low resolution image when downscaled.

Through this process, facial features like eyelashes, teeth and wrinkles that were impossible to see in the low resolution image become recognisable and detailed.

"Instead of starting with the low resolution image and slowly adding detail, PULSE traverses the high resolution natural image manifold, searching for images that downscale to the original low resolution image," states a paper detailing the research.
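The idea of searching for a high resolution image that downscales to the input can be sketched in a few lines of Python. This is only a toy illustration, not the researchers' implementation: the linear "generator" built from random `basis` images, the average-pooling `downscale` function and every name and parameter below are assumptions made for the example, standing in for the trained generative model a real system would rely on.

```python
import numpy as np

def downscale(img, factor):
    """Average-pool a 2D image by an integer factor."""
    h, w = img.shape
    return img.reshape(h // factor, factor, w // factor, factor).mean(axis=(1, 3))

def downscale_adjoint(residual, factor):
    """Transpose of average pooling: spread each low-res value over its block."""
    return np.repeat(np.repeat(residual, factor, axis=0), factor, axis=1) / factor**2

def generate(z, basis, mean):
    """Hypothetical toy generator: a mean image plus a weighted sum of basis images."""
    return mean + np.tensordot(z, basis, axes=1)

def search_latent(low_res, basis, mean, factor, steps=2000):
    """Gradient descent over the latent vector z so that
    downscale(generate(z)) matches low_res."""
    # Step size from the largest singular value of the combined linear map,
    # which keeps plain gradient descent stable for this toy problem.
    a = np.stack([downscale(b, factor).ravel() for b in basis], axis=1)
    step = 1.0 / np.linalg.norm(a, 2) ** 2
    z = np.zeros(basis.shape[0])
    for _ in range(steps):
        residual = downscale(generate(z, basis, mean), factor) - low_res
        # Gradient of 0.5 * ||residual||^2 with respect to z.
        grad = np.tensordot(basis, downscale_adjoint(residual, factor), axes=2)
        z -= step * grad
    return z, generate(z, basis, mean)

rng = np.random.default_rng(0)
basis = rng.normal(size=(4, 32, 32))   # four made-up 32x32 basis images
mean = rng.normal(size=(32, 32))
hidden = generate(rng.normal(size=4), basis, mean)
low_res = downscale(hidden, 8)         # the 4x4 "blurry" input

z, high_res = search_latent(low_res, basis, mean, 8)
print(np.abs(downscale(high_res, 8) - low_res).max())  # remaining mismatch
```

The search never sharpens the blurry input directly. It only ever proposes full-size candidates and keeps adjusting them until their downscaled version agrees with the input, which is the behaviour the paper's description points to.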

"Our method outperforms state-of-the-art methods in perceptual quality at higher resolutions and scale factors than previously possible."

The system could theoretically be used on low resolution images of almost anything, in fields ranging from medicine and microscopy to astronomy and satellite imagery.

This means noisy, poor-quality images of distant planets and solar systems could be imagined in high resolution.

The research will be presented at the 2020 Conference on Computer Vision and Pattern Recognition (CVPR) this week.