Let’s start with a simple mystery: What happened to original blockbuster movies?

Throughout the 20th century, Hollywood produced a healthy number of entirely new stories. The top movies of 1998—including Titanic, Saving Private Ryan, and There’s Something About Mary—were almost all based on original screenplays. But since then, the U.S. box office has been steadily overrun by numbers and superheroes: Iron Man 2, Jurassic Park 3, Toy Story 4, etc. Of the 10 top-grossing movies of 2019, nine were sequels or live-action remakes of animated Disney movies, with the one exception, Joker, being a gritty prequel of another superhero franchise.

Some people think this is awful. Some think it’s fine. I’m more interested in the fact that it’s happening. Americans used to go to movie theaters to watch new characters in new stories. Now they go to movie theaters to re-submerge themselves in familiar story lines.

A few years ago, I saw this shift from exploration to incrementalism as something specific to pop culture. That changed last year when I read a 2020 paper on the decline of originality in science, with a decidedly non-Hollywood title: “Stagnation and Scientific Incentives.”

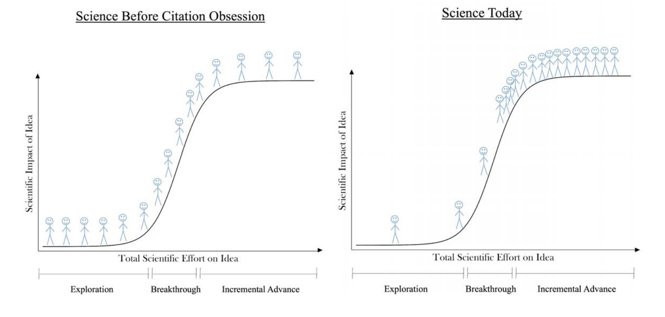

“New ideas no longer fuel economic growth the way they once did,” the economists Jay Bhattacharya and Mikko Packalen wrote. In the past few decades, citations have become a key metric for evaluating scientific research, which has pushed scientists to write papers that they think will be popular with other scientists. This causes many of them to cluster around a small set of popular subjects rather than take a gamble that might open a new field of study. To illustrate this shift, the co-authors included a simple drawing in their paper:

I remember exactly what I thought when I first saw this picture: Hey, it looks like Hollywood! Driven by popularity metrics, scientists, like movie studios, are becoming more likely to tinker in proven domains than to pursue risky original projects that might bloom into new franchises. It’s not that writers, directors, scientists, and researchers can’t physically come up with new ideas, but rather that something in the air—something in our institutions, or our culture—is constraining the growth of new ideas. In science, as in cinema, incrementalism is edging out exploration.

I couldn’t get the thought out of my head: Truly new ideas don’t fuel growth the way they once did. I saw its shadow everywhere.

In science and technology: “Everywhere we look we find that ideas are getting harder to find,” a group of researchers from Stanford University and MIT concluded in a 2020 paper. Specifically, they concluded that research productivity has declined sharply in a number of industries, including software, agriculture, and medicine. That conclusion is widely shared. “Scientific knowledge has been in clear secular decline since the early 1970s,” one pair of Swiss researchers put it. The University of Chicago scholar James Evans has found that as the number of scientific researchers has grown, progress has slowed down in many fields, perhaps because scientists are so overwhelmed by the glut of information in their domain that they’re clustering around the same safe subjects and citing the same few papers.

In entrepreneurship: Setting aside a spike during the coronavirus pandemic, U.S. business formation has been declining since the 1970s. One of America’s most important sources of entrepreneurship is immigration—because immigrants are far more likely than native-born Americans to start billion-dollar companies—but the U.S. is in a deep immigration depression at the moment.

In institutions: Until about a century ago, the U.S. was building top-flight colleges and universities at a dazzling clip. But the U.S. hasn’t built a new elite university in many decades. The federal government used to build new agencies to deal with novel problems, like the National Institutes of Health and the Centers for Disease Control and Prevention after World War II, or the Advanced Research Projects Agency (later known as DARPA) after Sputnik. Although the pandemic embarrassed the CDC, no major conversations are under way about creating new institutions to deal with the problem of 21st-century epidemics.

If you believe in the virtue of novelty, these are disturbing trends. Today’s scientists are less likely to publish truly new ideas, businesses are struggling to break into the market with new ideas, U.S. immigration policy is constricting the arrival of people most likely to found companies that promote new ideas, and we are less likely than previous generations to build institutions that advance new ideas.

“What about all the cool new stuff?” you might ask. What about the recent breakthroughs in mRNA technology? What about CRISPR, and AI, and solar energy, and battery technology, and electric vehicles, and (sure) crypto, and (yes!) smartphones? These are sensational accomplishments—or, in many cases, the promises of future accomplishments—punctuating a long era of broad technological stagnation. Productivity growth and average income growth have declined significantly from their mid-20th-century levels.

New ideas simply don’t fuel growth the way they once did. Imagine going to sleep in 1875 in New York City and waking up 25 years later. As you shut your eyes, there is no electric lighting. There are no cars on the road. Telephones are rare. There is no such thing as Coca-Cola, or sneakers, or basketball, or aspirin. The tallest building in Manhattan is a church.

When you wake up in 1900, the city has been entirely remade with towering steel-skeleton buildings called “skyscrapers” and automobiles powered by new internal combustion engines. People are riding bicycles, with rubber-soled shoes, in modern shorts—all inventions of this period. The Sears catalog, the cardboard box, and aspirin are new arrivals. People recently enjoyed their first sip of Coca-Cola, their first Kellogg’s Corn Flakes, and their first bite of what we now call an American hamburger. When you fell asleep in 1875, there was no such thing as a Kodak camera, or recorded music, or an instrument for capturing motion pictures for film projection. By 1900, we have the first version of all three: the simple box camera, the phonograph, and the cinematograph. As you slept, Thomas Edison unveiled his famous light bulb and electrified parts of New York.

It’s been a golden age for building institutions as well. Johns Hopkins University, Stanford, Carnegie Mellon University, and the University of Chicago were all founded while you were zonked. In the 1870s, a few Ivy League colleges messed around with rugby and haphazardly invented the sport of football. In 1891, James Naismith, a YMCA instructor in Massachusetts, erected a peach basket in a gymnasium and invented the game of basketball. Four years later and just 10 miles away at another YMCA, the physical-ed teacher William Morgan braided the serve from tennis and elements of team passing from handball to create volleyball.

How can you not be astonished and thrilled by the fact that all of this happened in 25 years? A quarter-century hibernation today would mean dozing off in 1996 and waking up in 2021. You would wonder at smartphones and the internet—marvelous inventions—but the physical world would feel much the same. Compare “cars have replaced horses as the best way to get across town” with “apps have replaced phones as the best way to order takeout.” If you believe in the virtue of novelty in the world of atoms, the golden years were a long time ago.

This is not the first time that somebody has accused America’s invention engine of running on fumes in the 21st century. (Not even the idea that America is running out of ideas is a new idea.)

In 2020, the venture capitalist Marc Andreessen published the instant-classic essay “It’s Time to Build,” which urged more innovation and entrepreneurship in public health, housing, education, and transportation. “The problem is inertia,” he wrote. “We need to want these things more than we want to prevent these things.” The same year, Ross Douthat published The Decadent Society, which leveled similar criticisms of languishing U.S. creativity. These works are partly descendants of Tyler Cowen’s books The Great Stagnation, which diagnosed a slowdown in America’s innovative mojo, and The Complacent Class, which observed that Americans are self-segregating into comfortable echo chambers rather than taking risks and challenging themselves.

So what, exactly, is happening?

One explanation is that none of this is our fault. We picked all the low-hanging fruit, solved all the secrets, invented all the easy inventions, told all the good stories, and now it’s genuinely harder to keep up the pace of idea generation.

In some ways this is probably true. Science and technology are much more complicated than they were in the 1890s, or in the 1790s. But the fear that nothing is left to discover has always been misguided. In the mid-1890s, the U.S. physicist Albert Michelson famously claimed that “most of the grand underlying principles have been firmly established” in the physical sciences. It wasn’t even 10 years later that Albert Einstein first revolutionized our understanding of space, time, mass, and energy.

I don’t think there is an overarching reason for our novelty stagnation. But let me offer three theories that might collectively explain a good chunk of this complex phenomenon.

1. The big marketplace of attention

Almost every smart cultural producer eventually learns the same lesson: Audiences don’t really like brand-new things. They prefer “familiar surprises”—sneakily novel twists on well-known fare.

As the biggest movie studios got more strategic about thriving in a competitive global market, they doubled down on established franchises. As the music industry learned more about audience preferences, radio airplay became more repetitive and the Billboard Hot 100 became more static. Across entertainment, industries now naturally gravitate toward familiar surprises rather than zany originality.

Science is also a transparent marketplace of attention, and it is following the same trajectory as film and music. Scientists know what journals are publishing and what the NIH is funding. The citation revolution pushes scientists to write papers that are likely to appeal to an audience of fellow researchers, who tend to prefer insights that jibe with their background. An analysis of research applications found that the NIH and the National Science Foundation have a demonstrated bias against papers that are highly original, preferring “low levels of novelty.” Scientists are thus encouraged to focus on subjects that they already understand to be popular, which means avoiding work that seems too radical and focusing instead on projects that are just the right blend of familiar and surprising.

The world is one big panopticon, and we don’t fully understand the implications of building a planetary marketplace of attention in which everything we do has an audience. Our work, our opinions, our milestones, and our subtle preferences are routinely submitted for public approval online. Maybe this makes culture more imitative. If you want to produce popular things, and you can easily tell from the internet what’s already popular, you’re simply more likely to produce more of that thing. This mimetic pressure is part of human nature. But perhaps the internet supercharges this trait and, in the process, makes people more hesitant about sharing ideas that aren’t already demonstrably pre-approved, which reduces novelty across many domains.

2. The creep of gerontocracy

We are living in an age of creeping gerontocracy.

Joe Biden is the oldest president in U.S. history. (If he had lost, Donald Trump would have been the oldest president in U.S. history.) The average age in Congress has hovered near its all-time high for the past decade. The Democrats’ House speaker and House majority leader are over 80. The Senate majority leader and minority leader are over 70. The fears and anxieties that dominate politics represent older Americans’ fears and fixations.

Across business, science, and finance, power is similarly concentrated among the elderly. The average age of Nobel Prize laureates has steadily increased in almost every discipline, and so has the average age of NIH grant recipients. Among S&P 500 companies, the average age of incoming CEOs has increased by more than a decade in the past 20 years. As I’ve written, Americans 55 and older account for less than one-third of the population, but they own two-thirds of the nation’s wealth—the highest level of wealth concentration on record.

Why does it matter that young people have a voice in tech and culture? Because young people are our most dependable source of new ideas in culture and science. They have the least to lose from cultural change and the most to gain from overthrowing legacies and incumbents.

The philosopher Thomas Kuhn famously pointed out that paradigm shifts in science and technology have often come from young people who revolutionized various subjects precisely because they were not so deeply indoctrinated in their established theories. One of the revolutionaries he mentioned, the physicist Max Planck, quipped that science proceeds “one funeral at a time” because new scientific truths thrive only when their opponents die and a new generation grows up with them. When this theory was rather literally put to the test in the 2016 paper “Does Science Advance One Funeral at a Time?,” researchers found that, in fact, when elite scientists die, younger and lesser-known scientists are more likely to introduce novel ideas that push the field forward. Planck was right.

Older people tend to have deeper expertise in any given domain, and their contributions are not to be cast aside. But innovation requires something orthogonal to expertise—a kind of useful naïveté—that is more common among the young. America’s creeping gerontocracy across politics, business, and science might be constricting the emergence of new paradigms.

3. The rise of “vetocracy”

What if it’s not American creativity that’s suffering, but rather that modern institutions have found new successful ways to thwart and constrict creativity, so that new ideas are equally likely to be born, but less likely to grow?

In his “Build” essay, Andreessen blasted America’s inability to construct not only wondrous machines such as supersonic aircraft and flying cars but also sufficient houses, infrastructure, and megaprojects. In a compelling response, Ezra Klein wrote that “the institutions through which Americans build have become biased against action rather than toward it.” He continued:

They’ve become, in political scientist Francis Fukuyama’s term, “vetocracies,” in which too many actors have veto rights over what gets built. That’s true in the federal government. It’s true in state and local governments. It’s even true in the private sector.

Last year, fewer bills were passed than in any year on record. From 1917 to 1970, the Senate took 49 votes to break filibusters, or less than one per year. Since 2010, it has had an average of 80 such votes annually. The Senate was once known as the “cooling saucer of democracy,” where populist notions went to chill out a bit. Now it’s the icebox of democracy, where legislation dies of hypothermia.

Vetocracy blocks new construction too, especially through endless environmental and safety-impact analyses that stop new projects before they can begin. “Since the 1970s, even as progressives have championed Big Government, they’ve worked tirelessly to put new checks on its power,” the historian Marc Dunkelman wrote. “The new protections [have] condemned new generations to live in civic infrastructure that is frozen in time.”

The best objection to everything that I’ve written so far is that there exists a world where young people tend to be in control, where regulatory burdens aren’t blocking megaprojects, and where new ideas are generally cherished and even, perhaps, fetishized. It’s the internet—or, more specifically, the software industry. If you are working on AI, or crypto, or virtual reality, you probably aren’t starved for new ideas. You very well may be drowning in them.

Undeniably, the communications revolution has been the most significant fount of new ideas in the past half century. But the vitality of the tech industry in comparison with other industries points up that the U.S. innovation system has devolved from variety to specialization in the past 40 years or so. The U.S. used to produce a broad diversity of patents across many industries—chemistry, biology, and so forth—whereas patents today are more concentrated in a single industry, the software industry, than at any other time on record. We’ve funneled treasure and talent into the world of bits, as the world of flesh and steel has decayed around it. In the past 50 years, climate change has worsened, nuclear power has practically disappeared, construction productivity has slowed down, and the cost of developing new drugs has soared.

What I want is for the physical world to rediscover the virtue of experimentation. I want more new companies and entrepreneurs, which means I want more immigrants. I want more megaprojects in infrastructure and more moon-shot bets in energy and transportation. I want new ways of funding scientific research. I want non-grifters to find ways to innovate in higher education to bend the cost curve of college inflation. I want more prizes for audacious breakthroughs in cancer and Alzheimer’s and longevity research. As strange as this might sound, I want the federal government to get into the experimentation game too and found new agencies that identify and solve the problems that will be created by this riot of newness, as the CDC and DARPA once did. And, finally, I’d like Hollywood to rediscover a passion for cinematic blockbusters that don’t have numbers in the title.