Oh, how far we've come since the days of Will Smith eating spaghetti. While AI-generated images have become increasingly realistic in recent years, video was always lagging several paces behind. But something seems to have shifted lately, both in the technology and in me.

Earlier this week I was scrolling through Creative Bloq's Twitter (sorry, X) feed, which is invariably filled with nonsensical slop – such is the algorithm since Elon Musk took over. But then I watched one video that horrified me. It was a seemingly candid smartphone video featuring (strap in, guys) a girl in a field swinging a golf club at a golf ball and driving it straight into the head of a duck. As the girl laughs, the duck flaps around in agony and confusion before falling into a lake and floating away, presumably to a slow and painful end.

I truly felt sorry for this duck. And then I felt angry at the girl. And then I looked through the comments to see who felt the same. This was when I discovered it was an AI-generated video. For the first time, I had fallen for one. I had been rage-baited by AI.

But of course, it isn't just me. If I'm falling for stupid duck videos, who else out there, particularly among those who aren't especially tech or internet savvy, is being deceived by AI videos? The answer is, almost definitely, a great many people. Last year, a study of over 2,000 people found that only 0.1% of participants could consistently tell real content from deepfakes when shown a mix of images and videos. And a few months ago, an experiment by the London School of Economics suggested the average person identifies fake videos correctly only about 60% of the time. That's barely better than chance.

And those numbers are only going to get worse. Generative AI video technology is improving at an alarming rate. Last month, an allegedly AI-generated 'movie clip' of Tom Cruise and Brad Pitt fighting went viral and filled several column inches, simply because of how believable it looked. Indeed, so polished was the clip that the New York Times claimed it had spooked Hollywood.

This was a 2 line prompt in seedance 2. If the hollywood is cooked guys are right maybe the hollywood is cooked guys are cooked too idk. pic.twitter.com/dNTyLUIwAV (February 11, 2026)

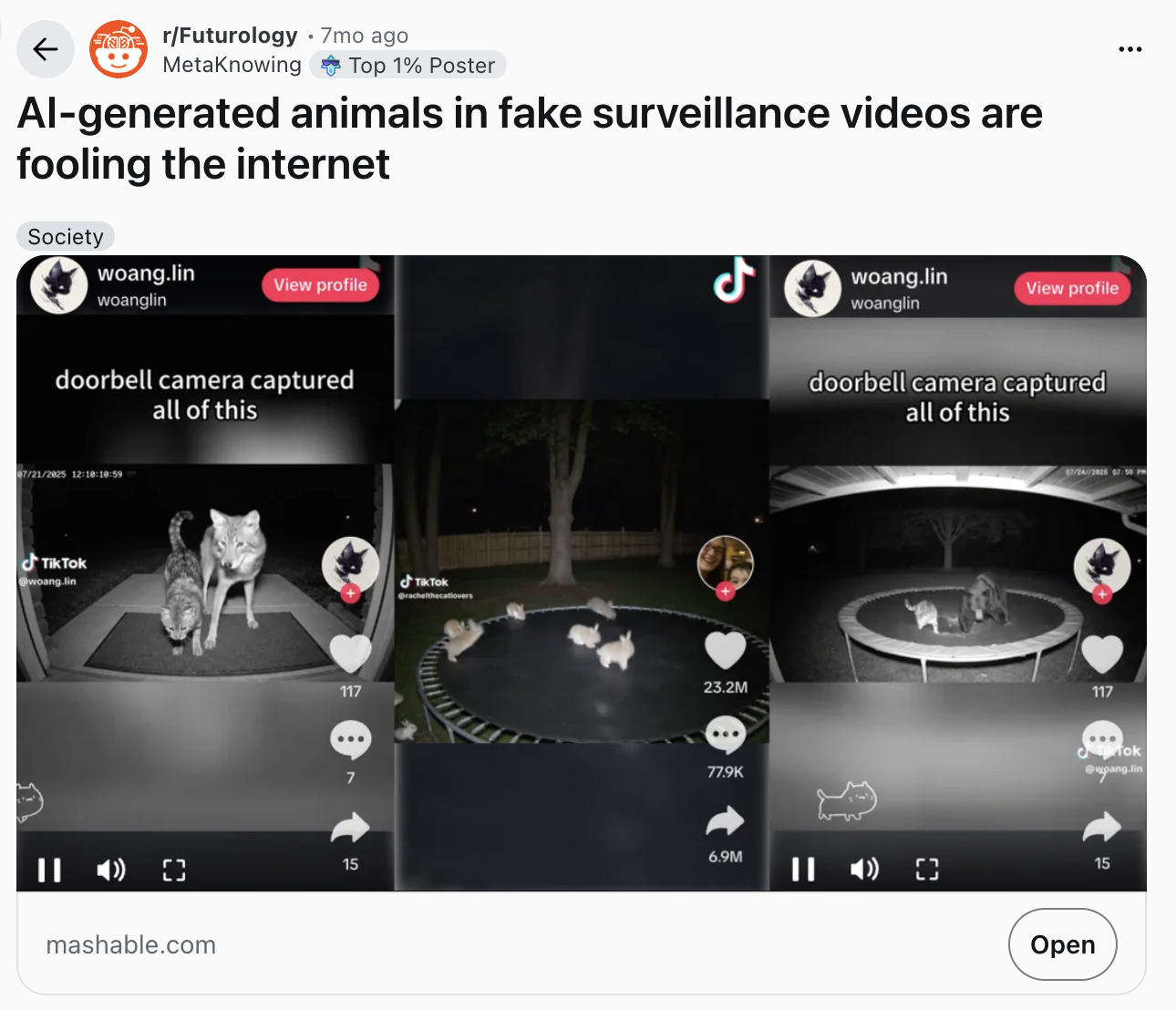

Claims that the clip was created from a "single prompt" in Seedance may have been debunked (apparently actors and a green screen may have been involved), but that doesn't change the fact that enough people believed an AI video this convincing could be made from mere prompts that it took over the internet for several days. That, surely, shows how far we've come.

But while genuinely AI-generated Hollywood-quality clips might still be a while away, it's the lo-fi stuff like that bloody duck that worries me. AI-generated animal videos, fake CCTV clips, and hyper-realistic “caught on camera” moments are becoming increasingly ubiquitous on social media – and these are the kinds of quick, low-quality videos that can provoke a reaction and go viral before anybody stops to question them.

It's when AI starts to blend seamlessly into the visual language of the internet – shaky phone footage, grainy security camera shots, TikTok edits, livestream clips – that it becomes truly insidious. This is the kind of content that isn't supposed to look perfect, which means the AI doesn't have to be flawless to fool idiots like me. But it's one thing to fall for a video of a duck getting hit by a golf ball, and another to fall for fake war footage.

We've published advice on how to spot AI-generated images, but video is a whole different ball game. Without the ability to zoom in and inspect pixels and details, we're forced to rely more on instinct and 'vibes'. But there are a few things to look out for.

Firstly, always take a look at the source of the video. If you're only seeing it come from one creator, particularly if their account looks generic or doesn't have many followers, there's a chance they might be pumping out AI content. Real clips tend to go viral quickly – and get shared by many people.

Secondly, watch out for narrative bait. Perfectly 'formulaic' stories designed to elicit an emotional response (animal rescues, celebrity dramas, shocking CCTV footage), especially if they happen to be captured from the perfect angle, might be too 'good' to be true.

And always listen to the audio. This is one area of AI that's lagging behind – cloned voices often don't sync up properly, or have a flat or robotic tone. And if the clip features no sound effects, with only a music track plastered over it, ask yourself why. Maybe the sound effects never existed.

In short, the time has come to start treating viral videos the way we used to treat chain emails: entertaining, occasionally convincing, and very possibly nonsense. Because it seems the next stage of AI realism isn't Hollywood-level special effects, it's a blurry video filmed on a stranger's phone.